Frequently Asked Questions

Product Overview & Use Cases

What is FalkorDB and what does it do?

FalkorDB is a high-performance graph database designed to manage complex relationships and power advanced AI applications. It is purpose-built for development teams working with interconnected data in real-time or interactive environments. Key use cases include Text2SQL, Security Graphs, GraphRAG, Agentic AI and chatbots, fraud detection, and high-performance graph storage.

What are the main use cases for FalkorDB?

FalkorDB is used for Text2SQL (natural language to SQL on complex schemas), building Security Graphs for CNAPP, CSPM & CIEM, advanced GraphRAG retrieval, powering Agentic AI and chatbots, enabling real-time fraud detection, and serving as a high-performance graph database for complex relationships.

Who is FalkorDB designed for?

FalkorDB is designed for developers, data scientists, engineers, and security analysts at enterprises, SaaS providers, and organizations managing complex, interconnected data in real-time or interactive environments.

What industries use FalkorDB?

Industries represented in FalkorDB case studies include Healthcare (AdaptX), Media and Entertainment (XR.Voyage), and Artificial Intelligence/Ethical AI Development (Virtuous AI).

How does FalkorDB support enterprise AI workflows?

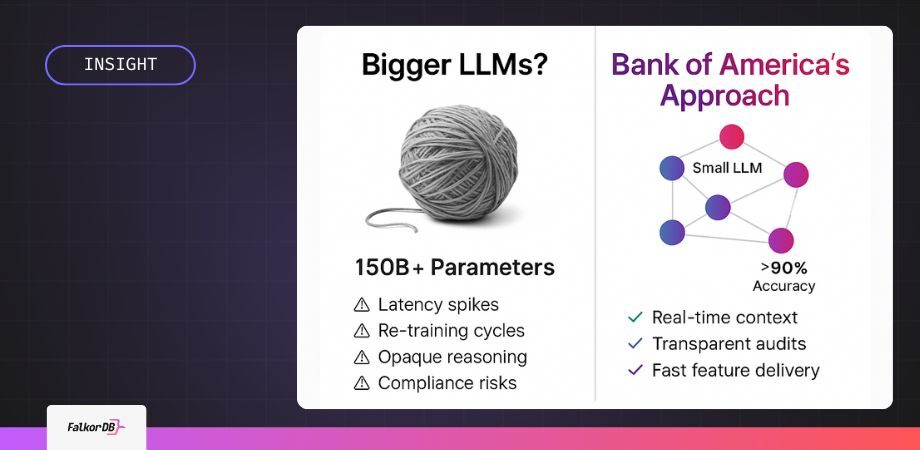

FalkorDB supports enterprise AI workflows by providing low-latency, high-accuracy graph-based retrieval (GraphRAG), stateful context management, transparent data lineage for compliance, and rapid feature deployment without costly retraining cycles. This architecture suits applications such as Bank of America's Erica, which require precision, speed, and auditability.

Features & Capabilities

What are the key features of FalkorDB?

Key features include ultra-low latency (up to 496x lower than competitors), 6x better memory efficiency, support for 10,000+ graphs per deployment (multi-tenancy), open-source licensing, linear scalability, advanced AI integration (GraphRAG, agent memory), cloud and on-prem deployment, and enhanced dashboards for interactive analysis.

Does FalkorDB support multi-tenancy?

Yes, FalkorDB supports multi-tenancy in all plans, enabling management of over 10,000 multi-graphs. This is especially valuable for SaaS providers and organizations with diverse user bases.
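
As a rough illustration of the one-graph-per-tenant idea, the sketch below keeps each tenant's edges in a separately named graph. The `TenantGraphs` class and the `tenant:` naming prefix are hypothetical and are not part of FalkorDB's API; a plain dictionary stands in for the database.

```python
import re


class TenantGraphs:
    """Toy in-memory stand-in for a server hosting many isolated graphs."""

    def __init__(self):
        # graph name -> set of (source, relation, target) edges
        self._graphs = {}

    @staticmethod
    def graph_name(tenant_id):
        # Hypothetical naming rule: one graph per tenant, keyed by a safe id.
        if not re.fullmatch(r"[A-Za-z0-9_-]+", tenant_id):
            raise ValueError(f"unsafe tenant id: {tenant_id!r}")
        return f"tenant:{tenant_id}"

    def add_edge(self, tenant_id, source, relation, target):
        edges = self._graphs.setdefault(self.graph_name(tenant_id), set())
        edges.add((source, relation, target))

    def neighbors(self, tenant_id, source):
        # Only the caller's own graph is ever consulted.
        edges = self._graphs.get(self.graph_name(tenant_id), set())
        return sorted(t for s, _, t in edges if s == source)


store = TenantGraphs()
store.add_edge("acme", "alice", "MANAGES", "bob")
store.add_edge("globex", "alice", "MANAGES", "carol")
print(store.neighbors("acme", "alice"))    # ['bob']
print(store.neighbors("globex", "alice"))  # ['carol']
```

Because every lookup starts from the tenant's own graph name, the same node key ("alice") can exist for two tenants without their data mixing.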

How does FalkorDB optimize for AI applications?

FalkorDB is optimized for AI use cases such as GraphRAG and agent memory, combining graph traversal with vector search for personalized, real-time adaptability in intelligent agents and chatbots. It enables structured, explicit context delivery for LLM pipelines, reducing latency and improving accuracy.
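
To make "graph traversal combined with vector search" concrete, here is a toy sketch in plain Python: rank nodes by cosine similarity to a query vector, then expand from the best match along graph edges to collect context. The function names, the sample data, and the breadth-first expansion are illustrative assumptions, not a description of FalkorDB's internals.

```python
import math
from collections import deque


def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def hybrid_retrieve(query_vec, embeddings, edges, top_k=1, hops=1):
    """Pick the top_k nodes by cosine similarity, then expand `hops` steps."""
    ranked = sorted(
        embeddings, key=lambda n: cosine(query_vec, embeddings[n]), reverse=True
    )
    seeds = ranked[:top_k]
    seen = set(seeds)
    frontier = deque((s, 0) for s in seeds)
    while frontier:
        node, depth = frontier.popleft()
        if depth == hops:
            continue  # do not expand past the hop limit
        for neighbor in edges.get(node, ()):
            if neighbor not in seen:
                seen.add(neighbor)
                frontier.append((neighbor, depth + 1))
    return seeds, sorted(seen - set(seeds))


embeddings = {
    "refund_policy": [0.9, 0.1, 0.0],
    "shipping_times": [0.1, 0.9, 0.0],
    "privacy_notice": [0.0, 0.1, 0.9],
}
edges = {
    "refund_policy": ["order_123", "payment_provider"],
    "shipping_times": ["carrier_x"],
}
seeds, context = hybrid_retrieve([1.0, 0.0, 0.0], embeddings, edges)
print(seeds)    # ['refund_policy']
print(context)  # ['order_123', 'payment_provider']
```

The vector step finds where to start; the graph step supplies the explicit, structured context that is then handed to the LLM.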

What integrations does FalkorDB offer?

FalkorDB integrates with frameworks such as Graphiti (by ZEP), g.v() for visualization, Cognee, LangChain, and LlamaIndex for LLM integration. These integrations enable advanced AI agent memory, knowledge graph visualization, and natural language interfaces.

Does FalkorDB provide an API?

Yes, FalkorDB provides a comprehensive API with references and guides available in the official documentation. This supports developers, data scientists, and engineers in integrating FalkorDB into their workflows.

Is FalkorDB open source?

Yes, FalkorDB is open source, encouraging community collaboration and transparency. This differentiates it from proprietary solutions like AWS Neptune.

What technical documentation is available for FalkorDB?

FalkorDB provides comprehensive technical documentation and API references at docs.falkordb.com and release notes on the GitHub Releases Page. These resources cover setup, advanced configurations, and integration guides.

Performance & Scalability

How does FalkorDB perform compared to other graph databases?

FalkorDB delivers up to 496x lower latency and 6x better memory efficiency than competitors such as Neo4j. It supports over 10,000 multi-graphs and offers flexible horizontal scaling, making it well suited to real-time, large-scale data analysis.

What makes FalkorDB suitable for real-time AI applications?

FalkorDB's ultra-low latency, in-memory storage model, and optimized graph traversal make it ideal for real-time AI applications, such as chatbots and intelligent agents, where speed and adaptability are critical.

How scalable is FalkorDB?

FalkorDB supports linear scalability and flexible horizontal scaling, efficiently managing large-scale, high-dimensional data. It can handle over 10,000 multi-graphs, making it suitable for enterprises and SaaS providers.

What are the memory efficiency benefits of FalkorDB?

FalkorDB provides 6x better memory efficiency than competitors, enabling efficient handling of large, complex datasets without excessive resource consumption.

Security & Compliance

Is FalkorDB SOC 2 Type II compliant?

Yes, FalkorDB is SOC 2 Type II compliant, meeting rigorous standards for security, availability, processing integrity, confidentiality, and privacy.

How does FalkorDB help with regulatory compliance?

FalkorDB's GraphRAG-SDK helps organizations stay ahead of financial regulations by mapping regulations to workflows, identifying compliance gaps, and providing actionable recommendations. Transparent data lineage supports audit and regulatory requirements.
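
The idea of mapping regulations to workflows and surfacing gaps can be sketched as a set comparison over a small graph-like structure. This is a toy illustration only: the function, the `REG-A`/`REG-B` labels, and the control names are hypothetical and do not reflect the GraphRAG-SDK's actual interface.

```python
def compliance_gaps(required_by_regulation, controls_by_workflow, applies_to):
    """Return {(regulation, workflow): sorted list of missing controls}."""
    gaps = {}
    for regulation, workflows in applies_to.items():
        required = required_by_regulation[regulation]
        for workflow in workflows:
            missing = required - controls_by_workflow.get(workflow, set())
            if missing:
                gaps[(regulation, workflow)] = sorted(missing)
    return gaps


# Hypothetical data: which controls each regulation requires ...
required_by_regulation = {
    "REG-A": {"identity_check", "transaction_log"},
    "REG-B": {"data_retention_policy"},
}
# ... which controls each workflow already implements ...
controls_by_workflow = {
    "onboarding": {"identity_check"},
    "payments": {"identity_check", "transaction_log"},
}
# ... and which workflows each regulation applies to.
applies_to = {
    "REG-A": ["onboarding", "payments"],
    "REG-B": ["onboarding"],
}

gaps = compliance_gaps(required_by_regulation, controls_by_workflow, applies_to)
for (regulation, workflow), missing in sorted(gaps.items()):
    print(regulation, workflow, missing)
```

Each reported gap names the regulation, the workflow, and the missing controls, which is the raw material for an actionable recommendation.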

What security features does FalkorDB offer?

FalkorDB's security controls cover the five SOC 2 trust services criteria: security (protection against unauthorized access), availability, processing integrity (accurate and timely data processing), confidentiality, and privacy. These controls are validated by its SOC 2 Type II audit.

Pricing & Plans

What pricing plans does FalkorDB offer?

FalkorDB offers four main pricing plans: FREE (for MVPs, with community support), STARTUP (priced per 1 GB per month; includes TLS and automated backups), PRO (priced per 8 GB per month; includes cluster deployment and high availability), and ENTERPRISE (custom pricing; includes VPC, custom backups, and 24/7 support). Current rates are listed on the FalkorDB pricing page.

What features are included in the FREE plan?

The FREE plan is designed for building a powerful MVP and includes community support. It is ideal for teams starting out and evaluating FalkorDB's capabilities.

What does the STARTUP plan cost and include?

The STARTUP plan is priced per 1 GB per month and includes TLS encryption and automated backups, making it suitable for growing teams that need additional security and reliability.

What does the PRO plan cost and include?

The PRO plan is priced per 8 GB per month and includes advanced features such as cluster deployment and high availability, targeting organizations with higher performance and reliability needs.

What is included in the ENTERPRISE plan?

The ENTERPRISE plan offers tailored pricing and includes enterprise-grade features such as VPC deployment, custom backups, and 24/7 support. It is designed for large organizations with complex requirements.

Competition & Comparison

How does FalkorDB compare to Neo4j?

FalkorDB offers up to 496x lower latency, 6x better memory efficiency, and flexible horizontal scaling, and it includes multi-tenancy in all plans. Neo4j uses an on-disk storage model, is written in Java, and offers multi-tenancy only in premium plans.

How does FalkorDB compare to AWS Neptune?

FalkorDB is open source, supports multi-tenancy, and delivers lower latency than AWS Neptune, which is proprietary, closed-source, and lacks multi-tenancy support. FalkorDB also supports the Cypher query language and efficient vector search.

How does FalkorDB compare to TigerGraph?

FalkorDB provides faster latency, more efficient memory usage, and flexible horizontal scaling compared to TigerGraph, which has limited horizontal scaling and moderate memory efficiency.

How does FalkorDB compare to ArangoDB?

FalkorDB demonstrates superior latency and memory efficiency, with flexible horizontal scaling, compared to ArangoDB's moderate memory efficiency and limited horizontal scaling.

What are the main differentiators of FalkorDB versus competitors?

FalkorDB's main differentiators include ultra-low latency, high memory efficiency, built-in multi-tenancy, open-source licensing, advanced AI integration, and enhanced user experience with interactive dashboards. These features provide a significant edge for performance-critical and AI-driven applications.

Customer Success & Testimonials

What feedback have customers given about FalkorDB's ease of use?

Customers like AdaptX and 2Arrows have praised FalkorDB for its ease of use and performance. AdaptX highlighted rapid access to clinical data insights, while 2Arrows' CTO called FalkorDB a 'game-changer' for its superior performance and intuitive design.

Can you share specific case studies of FalkorDB in action?

Yes, AdaptX uses FalkorDB for high-dimensional clinical data analysis, XR.Voyage overcame scalability challenges in immersive media, and Virtuous AI built a high-performance, multi-modal data store for ethical AI.

Who are some of FalkorDB's customers?

Notable customers include AdaptX, XR.Voyage, and Virtuous AI, each leveraging FalkorDB to solve complex data and AI challenges in their respective industries.

Implementation & Support

How long does it take to implement FalkorDB?

FalkorDB is built for rapid deployment, enabling teams to go from concept to enterprise-grade solutions in weeks, not months. This accelerates time-to-market for new applications.

How easy is it to get started with FalkorDB?

Getting started is straightforward: sign up for FalkorDB Cloud, try a free instance, run locally with Docker, schedule a demo, or access comprehensive documentation. Community support is available via Discord and GitHub.
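
Once an instance is running, a first query might look like the sketch below. It assumes the `falkordb` Python client package is installed and that it exposes `FalkorDB`, `select_graph`, and `query` as used here; the graph name `demo`, the `Person` label, the `KNOWS` relationship, the default host and port, and both helper functions are hypothetical choices for illustration. The query builder is pure Python; the connection function is only defined, not called, so the sketch loads without a server.

```python
def build_friend_query(person, friend):
    """Return a parameterized Cypher statement and its parameters."""
    cypher = (
        "MERGE (a:Person {name: $person}) "
        "MERGE (b:Person {name: $friend}) "
        "MERGE (a)-[:KNOWS]->(b) "
        "RETURN a.name, b.name"
    )
    return cypher, {"person": person, "friend": friend}


def run_on_local_instance(person, friend, host="localhost", port=6379):
    # Assumption: the `falkordb` client provides these calls; it is imported
    # lazily so the rest of the sketch works without the package installed.
    from falkordb import FalkorDB

    graph = FalkorDB(host=host, port=port).select_graph("demo")
    cypher, params = build_friend_query(person, friend)
    return graph.query(cypher, params).result_set


cypher, params = build_friend_query("Ada", "Grace")
print(cypher)
print(params)
```

Passing values as parameters rather than formatting them into the query string keeps user input out of the Cypher text.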

What support and training options are available?

FalkorDB offers comprehensive documentation, community support via Discord and GitHub, access to solution architects, and free trial/demo options for onboarding.

Where can I find tutorials and practical guides for FalkorDB?

Tutorials and guides are available on the FalkorDB blog, including practical articles like 'Getting Started with Graphiti and FalkorDB' and technical deep-dives.

Pain Points & Business Impact

What problems does FalkorDB solve for enterprises?

FalkorDB addresses trust and reliability in LLM-based applications, scalability and data management, alert fatigue in cybersecurity, performance limitations of competitors, interactive data analysis, regulatory compliance, and support for agentic AI and chatbots.

What business impact can customers expect from using FalkorDB?

Customers can expect improved scalability, greater trust in and reliability of LLM-based applications, reduced alert fatigue, faster time-to-market, a better user experience, easier regulatory compliance, and support for advanced AI applications.

How does FalkorDB help reduce latency in AI agent workflows?

FalkorDB's graph-based retrieval (GraphRAG) reduces query latency by up to 70% compared to relational joins, ensuring AI agents remain responsive and compliant in enterprise environments.

How does FalkorDB address context loss and stateless interaction in AI agents?

FalkorDB's graph structures maintain stateful context, eliminating re-prompting delays and improving the efficiency and accuracy of AI agent interactions.
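
One way to picture stateful context is a small graph in which each conversation turn is linked to the entities it mentions, so later turns can pull earlier ones back by entity instead of asking the user again. The `ConversationMemory` class below is a hypothetical, in-memory illustration, not FalkorDB's agent-memory implementation.

```python
from collections import defaultdict


class ConversationMemory:
    """Toy graph memory: turn nodes linked to the entities they mention."""

    def __init__(self):
        self.turns = []                    # index = turn id, value = text
        self.mentions = defaultdict(list)  # entity -> list of turn ids

    def remember(self, text, entities):
        turn_id = len(self.turns)
        self.turns.append(text)
        for entity in entities:
            self.mentions[entity].append(turn_id)
        return turn_id

    def context_for(self, entities):
        # Follow entity -> turn edges; keep original order, drop repeats.
        turn_ids = sorted(
            {t for e in entities for t in self.mentions.get(e, [])}
        )
        return [self.turns[t] for t in turn_ids]


memory = ConversationMemory()
memory.remember("My order 123 has not arrived.", ["order_123"])
memory.remember("I paid with a credit card.", ["payment"])
memory.remember("Can I get a refund for it?", ["order_123", "refund"])
print(memory.context_for(["order_123"]))
# ['My order 123 has not arrived.', 'Can I get a refund for it?']
```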

How does FalkorDB support transparent and auditable AI reasoning?

FalkorDB enables transparent provenance by allowing every AI response to trace its context through graph relationships, which is critical for audits and regulatory compliance.
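
Tracing a response back through graph relationships can be sketched as a walk over "derived from" edges until the root sources are reached. The function name, the edge dictionary, and the sample identifiers are hypothetical and serve only to illustrate the idea.

```python
def trace_provenance(node, derived_from):
    """Walk derived-from edges from a response back to its root sources."""
    sources, stack, seen = [], [node], {node}
    while stack:
        current = stack.pop()
        parents = derived_from.get(current, [])
        if not parents and current != node:
            sources.append(current)  # no further parents: a root source
        for parent in parents:
            if parent not in seen:
                seen.add(parent)
                stack.append(parent)
    return sorted(sources)


derived_from = {
    "response_42": ["fact_balance", "fact_fee"],
    "fact_balance": ["ledger_2024_q1"],
    "fact_fee": ["fee_schedule_v3", "ledger_2024_q1"],
}
print(trace_provenance("response_42", derived_from))
# ['fee_schedule_v3', 'ledger_2024_q1']
```

An auditor can read the result as the set of records the response ultimately rests on.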