Graphiti now supports FalkorDB as a graph database backend for multi-agent environments. This integration addresses performance and isolation requirements in production AI agent deployments.

Graphiti, Zep AI’s open-source framework, builds temporally aware knowledge graphs that represent how relationships between entities evolve over time.

Preston Rasmussen, lead researcher at Zep AI, explains: “What makes Graphiti unique is its ability to autonomously build a knowledge graph while handling changing relationships and maintaining historical context.”

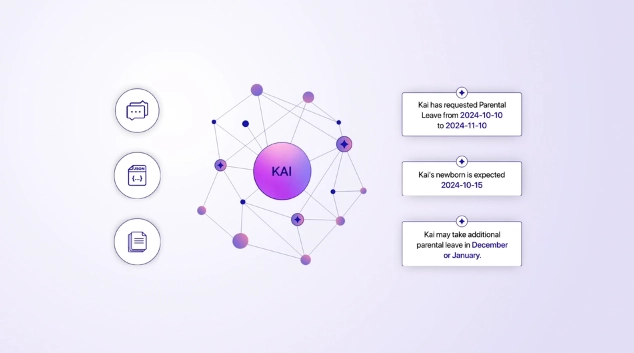

The framework processes both unstructured conversational data and structured business data through its episodic memory architecture. Each fact carries valid_at and invalid_at timestamps that record when it became true and when it stopped being true, so agents can track how user preferences and environmental conditions change.
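To make the valid_at/invalid_at bookkeeping concrete, here is a minimal Python sketch. Graphiti attaches these timestamps to facts and uses its own extraction pipeline to decide which existing facts a new episode contradicts; the `Fact` record, `add_fact`, `as_of`, and `utc` helpers below are hypothetical, and they assume a deliberately simple rule that a newer fact with the same subject and predicate supersedes the older one.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Fact:
    """Hypothetical fact record: subject --predicate--> obj, true over [valid_at, invalid_at)."""
    subject: str
    predicate: str
    obj: str
    valid_at: datetime
    invalid_at: Optional[datetime] = None  # None means "still true"


def add_fact(facts: list[Fact], new: Fact) -> None:
    """Append a fact, closing the validity window of any open fact it supersedes.

    Simplifying assumption: a fact is superseded by a newer one with the same subject and predicate.
    """
    for old in facts:
        if (old.subject, old.predicate) == (new.subject, new.predicate) and old.invalid_at is None:
            old.invalid_at = new.valid_at  # the old fact stops being true when the new one starts
    facts.append(new)


def as_of(facts: list[Fact], when: datetime) -> list[Fact]:
    """Return the facts that were true at the given moment."""
    return [f for f in facts if f.valid_at <= when and (f.invalid_at is None or when < f.invalid_at)]


def utc(year: int, month: int, day: int) -> datetime:
    return datetime(year, month, day, tzinfo=timezone.utc)


facts: list[Fact] = []
add_fact(facts, Fact("alice", "prefers_cuisine", "sushi", utc(2024, 1, 1)))
add_fact(facts, Fact("alice", "prefers_cuisine", "tacos", utc(2024, 6, 1)))
print([f.obj for f in as_of(facts, utc(2024, 3, 1))])  # ['sushi']
print([f.obj for f in as_of(facts, utc(2024, 7, 1))])  # ['tacos']
```

Because superseded facts are closed rather than deleted, the history stays queryable: asking about March still returns the earlier preference.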

Integrating Diverse Data into Knowledge Graph Memory

Graphiti’s memory architecture can ingest data from a wide range of sources: chat transcripts, highly structured JSON, or raw unstructured text, in whatever mix of formats and flows your application produces. You can stitch these inputs into one cohesive knowledge graph or organize them into parallel graphs within the same environment.
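As we understand Graphiti’s ingestion API, each episode carries a body, a source type (message, text, or JSON), and an optional group ID that partitions the graph. The dependency-free sketch below mimics that shape so the idea of one combined graph versus parallel graphs is concrete; `SourceType`, `Episode`, `to_episode`, and `partition` are hypothetical names, not Graphiti’s actual classes.

```python
import json
from dataclasses import dataclass
from enum import Enum


class SourceType(Enum):
    # Hypothetical mirror of the three kinds of input described above.
    MESSAGE = "message"
    TEXT = "text"
    JSON = "json"


@dataclass(frozen=True)
class Episode:
    name: str
    body: str           # raw payload as a string; structured records are serialized
    source: SourceType
    group_id: str       # selects which graph (or partition) the episode lands in


def to_episode(name: str, payload, group_id: str) -> Episode:
    """Normalize a chat transcript, structured record, or free text into one episode shape."""
    if isinstance(payload, dict):
        return Episode(name, json.dumps(payload, sort_keys=True), SourceType.JSON, group_id)
    if isinstance(payload, list):
        # A chat transcript given as (speaker, utterance) pairs.
        body = "\n".join(f"{speaker}: {text}" for speaker, text in payload)
        return Episode(name, body, SourceType.MESSAGE, group_id)
    return Episode(name, str(payload), SourceType.TEXT, group_id)


def partition(episodes: list[Episode]) -> dict[str, list[Episode]]:
    """Group episodes by group_id: one key means one combined graph, several keys mean parallel graphs."""
    graphs: dict[str, list[Episode]] = {}
    for ep in episodes:
        graphs.setdefault(ep.group_id, []).append(ep)
    return graphs


eps = [
    to_episode("chat-1", [("user", "I moved to Lisbon"), ("agent", "Noted!")], "support"),
    to_episode("order-42", {"order_id": 42, "status": "shipped"}, "sales"),
    to_episode("memo", "Q3 returns policy updated.", "support"),
]
graphs = partition(eps)
print(sorted(graphs))  # ['sales', 'support']
```

Using a single group ID for every episode would merge all three sources into one graph; distinct IDs keep them in parallel graphs.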

This flexibility lets conversation snippets, system events, and business records all shape your agent’s context. Instead of juggling siloed sources or relying on brittle retrieval-augmented generation (RAG) systems, your agents build a continuously updated understanding of their operational world.

Who is this integration for?

Developers building multi-agent systems gain lower query latency and tenant isolation without architectural changes to existing agent logic. The integration keeps Graphiti’s API surface and temporal data model intact while drawing on FalkorDB’s performance optimizations. Together, they keep context relevant to both the business domain and the current point in time.

This integration is key to solving the following problems:

Why Conventional RAG Falls Short for Agents

Naive RAG approaches often stumble in fast-moving, agent-heavy environments. These systems generally assume their knowledge base remains relatively static, a luxury that real business scenarios rarely afford. When the underlying data shifts frequently, RAG pipelines risk returning outdated or incomplete information because they aren’t built for rapid, live updates.

Take Microsoft’s GraphRAG, for example: it builds a knowledge graph of entities, groups them into clusters, and has a large language model precompute summaries of each cluster. The upside: this delivers rich, insightful answers over large, mostly static datasets. The downside comes down to two core issues:

Stale Context: Updating the knowledge graph often requires reprocessing large portions of the data, which creates considerable lag before agents see the latest information.

Slow Response Times: Multi-step reasoning, spanning several calls to language models, introduces latency that can stretch into tens of seconds, well beyond what real-time agents or users can tolerate.

For agentic applications where decisions hinge on timely and context-aware understanding, these limitations undercut scalability and effectiveness. You need live memory, not just static snapshots, to keep pace with changing conversations and shifting user needs.

Agent Knowledge Conflicts

Multiple AI agents writing to a shared knowledge graph without proper isolation can overwrite each other’s facts or see data they shouldn’t, and the risk grows as you scale. FalkorDB’s multi-tenancy gives each agent (or tenant) its own graph within a shared database instance, which avoids update conflicts without the cost of duplicating infrastructure.
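In FalkorDB, a single server can host many independent graphs, each addressed by its own key, and every query names the graph it targets. The toy in-memory model below shows why that isolates agents: writes land in separate namespaces even when two agents record something about the same entity. `SharedInstance` and `graph_for` are hypothetical names for illustration, not part of FalkorDB or Graphiti.

```python
class SharedInstance:
    """Toy stand-in for one database server that hosts many independent named graphs."""

    def __init__(self) -> None:
        # graph name -> {(node, property): value}; each graph is its own namespace
        self._graphs: dict[str, dict[tuple[str, str], str]] = {}

    def write(self, graph: str, node: str, prop: str, value: str) -> None:
        self._graphs.setdefault(graph, {})[(node, prop)] = value

    def read(self, graph: str, node: str, prop: str):
        # Reading an unknown graph returns None and does not create it.
        return self._graphs.get(graph, {}).get((node, prop))

    def graph_names(self) -> list[str]:
        return sorted(self._graphs)


def graph_for(agent_id: str) -> str:
    """Hypothetical naming convention: one dedicated graph per agent."""
    return f"agent_memory:{agent_id}"


db = SharedInstance()
# Two agents record different notes about the same customer; neither overwrites the other.
db.write(graph_for("billing"), "customer_7", "last_topic", "invoice")
db.write(graph_for("support"), "customer_7", "last_topic", "login issue")
print(db.read(graph_for("billing"), "customer_7", "last_topic"))  # invoice
print(db.read(graph_for("support"), "customer_7", "last_topic"))  # login issue
print(db.graph_names())  # ['agent_memory:billing', 'agent_memory:support']
```

The design choice is to isolate by graph key rather than by process: every graph lives in the same server, so agents share compute while their data stays separate.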

Query Latency Bottlenecks

Knowledge-intensive agents often need sub-10ms response times for multi-hop reasoning queries. With other graph databases, traversals across millions of nodes can exceed acceptable latency thresholds and degrade the user experience. FalkorDB stores adjacency as sparse matrices and evaluates traversals with sparse linear algebra (GraphBLAS), which can cut query execution time from seconds to milliseconds and make real-time agent interactions practical.
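The pure-Python sketch below illustrates the sparse-matrix idea at toy scale, assuming integer node IDs. The graph is stored as a CSR (compressed sparse row) adjacency matrix, and each hop is the frontier vector multiplied by that matrix over the boolean semiring, masked by the nodes already visited. It is an illustration of the technique, not FalkorDB’s implementation, and `to_csr`, `expand`, and `within_k_hops` are hypothetical names.

```python
def to_csr(n: int, edges: list[tuple[int, int]]) -> tuple[list[int], list[int]]:
    """Build a CSR adjacency matrix for n nodes; only non-zero entries (edges) are stored."""
    indptr = [0] * (n + 1)
    for src, _ in edges:
        indptr[src + 1] += 1
    for i in range(n):
        indptr[i + 1] += indptr[i]  # prefix sums: row i spans indices[indptr[i]:indptr[i + 1]]
    indices = [0] * len(edges)
    fill = indptr[:-1]  # slicing copies, so indptr itself is untouched
    for src, dst in edges:
        indices[fill[src]] = dst
        fill[src] += 1
    return indptr, indices


def expand(frontier: set[int], indptr: list[int], indices: list[int]) -> set[int]:
    """One hop: frontier vector times adjacency matrix over the boolean (OR, AND) semiring."""
    nxt: set[int] = set()
    for row in frontier:
        nxt.update(indices[indptr[row]:indptr[row + 1]])  # touch only this row's stored entries
    return nxt


def within_k_hops(start: int, k: int, indptr: list[int], indices: list[int]) -> set[int]:
    """Nodes other than start reachable within k hops (BFS as masked sparse matrix-vector products)."""
    visited = {start}
    frontier = {start}
    for _ in range(k):
        frontier = expand(frontier, indptr, indices) - visited  # mask: drop already-seen nodes
        if not frontier:
            break
        visited |= frontier
    return visited - {start}


indptr, indices = to_csr(5, [(0, 1), (1, 2), (2, 3), (0, 4)])
print(sorted(within_k_hops(0, 2, indptr, indices)))  # [1, 2, 4]
```

Because each hop reads only the stored entries in the frontier’s rows, the work tracks the edges actually traversed rather than the total number of nodes in the graph.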

"The technical architecture supports our episodic memory model efficiently. FalkorDB's tenant isolation implementation enables concurrent agent operations while maintaining the sub-millisecond response times our production deployments require."

Gal Shubeli, FalkorDB Lead GraphRAG-SDK Developer

Here's how you can take advantage of this

Applications that require memory, context, and a smooth user experience are first in line to benefit from this partnership. Here are some representative use cases:

Custom Domain Entities: Model Your Knowledge Graph for Your Application

Graphiti gives you the flexibility to model data that fits your application. Instead of relying on generic entity types, you define custom, domain-specific entities (customers, sales orders, procedural memory) directly within the knowledge graph.

How does this work in practice? You define your custom entity structures using familiar data modeling tools, like Pydantic models in Python. Whether you capture user travel destinations, purchase history, or internal business workflows, these entities work as native components in the system.

Track user info (hobbies, key contacts, preferences)? Define a UserProfile entity with only the fields that matter.

Manage transactional or procedural knowledge like step-by-step instructions? Create a TaskSequence entity to store and evolve this memory.

Handle business-specific records, from inventory items to support tickets? Each becomes its own custom entity, complete with relevant relationships.
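In Graphiti these entity types would be Pydantic models supplied when ingesting episodes (the Graphiti documentation shows the exact parameter). To keep the example dependency-free, the sketch below uses standard-library dataclasses instead; `UserProfile`, `TaskSequence`, `ENTITY_TYPES`, and `build_entity` are hypothetical, and the filtering step only illustrates the idea of keeping the fields that matter.

```python
from dataclasses import dataclass, field, fields
from typing import Optional


@dataclass
class UserProfile:
    """Only the fields this application cares about."""
    hobbies: list[str] = field(default_factory=list)
    key_contacts: list[str] = field(default_factory=list)
    dietary_preference: Optional[str] = None


@dataclass
class TaskSequence:
    """Procedural memory: an ordered list of steps that can be revised over time."""
    goal: str = ""
    steps: list[str] = field(default_factory=list)


# Hypothetical registry mapping a label to the type an extractor should populate.
ENTITY_TYPES = {"UserProfile": UserProfile, "TaskSequence": TaskSequence}


def build_entity(label: str, attributes: dict):
    """Instantiate a registered entity type, keeping only the fields it declares."""
    cls = ENTITY_TYPES[label]
    allowed = {f.name for f in fields(cls)}
    return cls(**{k: v for k, v in attributes.items() if k in allowed})


extracted = {"hobbies": ["climbing"], "dietary_preference": "vegetarian", "shoe_size": 42}
profile = build_entity("UserProfile", extracted)
print(profile)
# UserProfile(hobbies=['climbing'], key_contacts=[], dietary_preference='vegetarian')
```

Attributes that the schema does not declare (here `shoe_size`) are dropped, which is one simple way to keep extraction focused on your domain.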

Graphiti automatically identifies, deduplicates, and labels these entities as they stream in from conversations or structured data sources. This improves agent recall (was it sushi or tacos last time? Who reported which bug?) and produces more contextual, precise, and useful responses from your agents at every interaction.
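Graphiti’s own entity resolution is considerably more sophisticated than string matching. The sketch below swaps in a much simpler, hypothetical rule (exact match after normalizing Unicode, case, punctuation, and whitespace) only to show where deduplication sits: raw mentions arrive, and each one is attached to a single canonical entity. `normalize` and `resolve` are illustrative names.

```python
import re
import unicodedata


def normalize(name: str) -> str:
    """Canonical key: Unicode-normalized, case-folded, punctuation stripped, whitespace collapsed."""
    text = unicodedata.normalize("NFKC", name).casefold()
    text = re.sub(r"[^\w\s]", "", text)
    return " ".join(text.split())


def resolve(mentions: list[str]) -> dict[str, list[str]]:
    """Group raw mentions under one entity, labeled with the first spelling seen."""
    label_for_key: dict[str, str] = {}
    entities: dict[str, list[str]] = {}
    for mention in mentions:
        label = label_for_key.setdefault(normalize(mention), mention)
        entities.setdefault(label, []).append(mention)
    return entities


groups = resolve(["Acme Corp.", "acme corp", "ACME  Corp", "Globex"])
print(list(groups))  # ['Acme Corp.', 'Globex']
```

A real resolver also has to merge mentions that do not match textually (for example, a nickname and a full name), which is where embedding similarity or model-based judgment comes in.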

You get context extraction that fits your domain, less noise, and a continuously improving multi-agent environment, all without wrestling with rigid schemas or sacrificing performance.

Customer Service Agent Fleets

Multiple agents handle concurrent customer interactions that require access to product catalogs, support histories, and policy databases. Each agent maintains customer-specific context while drawing on shared product knowledge. FalkorDB’s tenant isolation keeps each customer’s data out of reach of agents serving other customers, preserving privacy while still allowing queries against shared product relationships, even as your business scales.

Enterprise Knowledge Management

Organizations deploy specialized agents for different departments (HR, legal, engineering) accessing overlapping but restricted knowledge domains. Agents require department-specific views of company knowledge without cross-contamination. FalkorDB’s multi-tenancy ensures compliance boundaries while enabling authorized cross-department knowledge sharing.
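One way to express department-specific views with authorized sharing on top of per-tenant graphs is an application-level policy that decides which graphs each department’s agent may query. FalkorDB provides the separate graphs; the policy layer below, including `AccessPolicy` and the `kb:<department>` graph naming, is a hypothetical sketch rather than a FalkorDB feature.

```python
from dataclasses import dataclass, field


@dataclass
class AccessPolicy:
    """Each department owns one graph; cross-department reads require an explicit grant."""
    grants: dict[str, set[str]] = field(default_factory=dict)  # reader -> departments it may read

    def allow(self, reader: str, owner: str) -> None:
        self.grants.setdefault(reader, set()).add(owner)

    def readable_graphs(self, department: str) -> list[str]:
        owners = {department} | self.grants.get(department, set())
        return sorted(f"kb:{d}" for d in owners)

    def check(self, department: str, graph: str) -> None:
        """Raise before a query runs if the department is not authorized for the graph."""
        if graph not in self.readable_graphs(department):
            raise PermissionError(f"{department} may not read {graph}")


policy = AccessPolicy()
policy.allow("legal", "hr")  # legal may consult HR policies, but not the reverse
print(policy.readable_graphs("legal"))        # ['kb:hr', 'kb:legal']
print(policy.readable_graphs("engineering"))  # ['kb:engineering']
policy.check("legal", "kb:hr")                # passes silently
```

Grants are one-directional by design, so authorizing legal to read HR data does not expose legal’s graph to HR agents.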

Why Hybrid Indexing Matters for Agent Memory

Hybrid indexing combines semantic embeddings, keyword search (BM25), and graph traversal to deliver concrete benefits for agent memory systems. By mixing these complementary approaches, you can pinpoint relevant facts and context with speed and accuracy. Here is what that means in practice:
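A common way to merge the ranked lists that semantic search, BM25, and graph traversal return is reciprocal rank fusion (RRF): each candidate scores 1/(k + rank) in every list where it appears, and the summed scores decide the final order. The sketch below is a generic RRF implementation, not Graphiti’s code; the fact IDs are made up, and k = 60 is the constant proposed in the original RRF paper.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Fuse ranked lists: each item scores sum(1 / (k + rank)) over the lists it appears in."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, item in enumerate(ranking, start=1):
            scores[item] = scores.get(item, 0.0) + 1.0 / (k + rank)
    # Highest score first; ties broken alphabetically for a deterministic order.
    return sorted(scores, key=lambda item: (-scores[item], item))


semantic = ["f_sushi", "f_tacos", "f_allergy"]   # embedding similarity
bm25 = ["f_allergy", "f_sushi"]                  # keyword match
graph = ["f_sushi", "f_allergy", "f_address"]    # graph neighborhood
print(reciprocal_rank_fusion([semantic, bm25, graph]))
# ['f_sushi', 'f_allergy', 'f_tacos', 'f_address']
```

Here `f_allergy`, which appears in all three lists, outranks `f_tacos`, which appears in only one: RRF rewards agreement between complementary signals without needing their raw scores to be comparable.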

Consistent, Low-Latency Retrieval

Rather than waiting for large language models to process and summarize data at query time, hybrid indexing enables sub-second responses. You need this for voice assistants, chatbots, and other interactive AI where response time matters.

Scalability Without Slowdown

Whether you have thousands or millions of knowledge nodes, the hybrid approach keeps query times low as your dataset grows. Semantic search finds meaning, keyword search captures specificity, and graph traversal preserves relationships.

Contextual Precision

Your agents can quickly fetch not just raw facts, but the right facts in the right context: whether based on conversational nuance, business rules, or past user preferences. This combination supports memory-driven applications in customer service, enterprise automation, and beyond.
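One way to favor the right context is to rerank retrieved facts by how close they sit in the graph to a focal entity, such as the user in the current conversation. The sketch below computes breadth-first hop counts over a hypothetical adjacency dictionary and stably sorts candidates by distance, so unreachable facts go last and ties keep their incoming order (for example, a fused-score order). The node names and helpers are illustrative assumptions.

```python
from collections import deque


def bfs_distances(adj: dict[str, list[str]], source: str) -> dict[str, int]:
    """Hop counts from source to every node reachable along the edges listed in adj."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nbr in adj.get(node, []):
            if nbr not in dist:
                dist[nbr] = dist[node] + 1
                queue.append(nbr)
    return dist


def rerank_by_distance(candidates: list[str], adj: dict[str, list[str]], focal: str) -> list[str]:
    """Closer facts first; Python's sort is stable, so ties keep their original order."""
    dist = bfs_distances(adj, focal)
    return sorted(candidates, key=lambda node: dist.get(node, float("inf")))


adj = {
    "alice": ["alice_likes_sushi", "order_42"],
    "order_42": ["order_42_shipped"],
    "bob": ["bob_likes_tacos"],
}
candidates = ["bob_likes_tacos", "order_42_shipped", "alice_likes_sushi"]
print(rerank_by_distance(candidates, adj, "alice"))
# ['alice_likes_sushi', 'order_42_shipped', 'bob_likes_tacos']
```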

In short, adopting a hybrid indexing system means your agents receive up-to-date, context-rich knowledge fast enough to power real-time, scalable AI solutions.

Next Steps

This integration shows that multi-agent knowledge graphs benefit from a purpose-built database architecture. Generic graph databases can create bottlenecks that compound as agent populations grow. The Graphiti partnership illustrates how database design directly affects whether agent systems remain viable at enterprise scale.

- Graphiti + FalkorDB quickstart example: https://github.com/getzep/graphiti/blob/main/examples/quickstart/quickstart_falkordb.py

- Example project (podcast): https://github.com/getzep/graphiti/tree/main/examples/podcast

The simplest way to run FalkorDB is via Docker:

```bash
docker run -p 6379:6379 -p 3000:3000 -it --rm falkordb/falkordb:latest
```
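Once the container is running, FalkorDB accepts Redis-protocol connections on port 6379 (port 3000 is mapped for the bundled browser UI). In practice you would connect through the `falkordb` Python client or Graphiti’s FalkorDB driver, as in the quickstart linked above. The dependency-free sketch below only shows what a `GRAPH.QUERY` request looks like on the wire; `encode_command` and `send_query` are hypothetical helpers, and `send_query` returns the first chunk of raw, unparsed reply bytes.

```python
import socket


def encode_command(*args: str) -> bytes:
    """Encode a command as a RESP array of bulk strings (the Redis wire protocol)."""
    out = [f"*{len(args)}\r\n".encode()]
    for arg in args:
        data = arg.encode("utf-8")
        out.append(f"${len(data)}\r\n".encode() + data + b"\r\n")
    return b"".join(out)


def send_query(graph: str, cypher: str, host: str = "localhost", port: int = 6379) -> bytes:
    """Send GRAPH.QUERY to a running server; returns raw reply bytes (first chunk only, unparsed)."""
    with socket.create_connection((host, port), timeout=5) as sock:
        sock.sendall(encode_command("GRAPH.QUERY", graph, cypher))
        return sock.recv(65536)


print(encode_command("GRAPH.QUERY", "demo", "RETURN 1"))
# With the container running: send_query("demo", "RETURN 1")
```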

