GraphRAG-Bench Novel — Leaderboard

20 novels, 2,010 questions, Answer Correctness (ACC) per question type.

| # | System | Fact Retrieval | Complex Reasoning | Contextual Summarize | Creative Generation | Overall |
|---|---|---|---|---|---|---|
| 1 | FalkorDB GraphRAG-SDK | 65.22 | 58.63 | 69.54 | 57.08 | 63.73 |
| 2 | AutoPrunedRetriever | 45.99 | 62.80 | 83.10 | 62.97 | 63.72 |
| 3 | G-Reasoner | 60.07 | 53.92 | 71.28 | 50.48 | 58.94 |
| 4 | HippoRAG2 | 60.14 | 53.38 | 64.10 | 48.28 | 56.48 |
| 5 | Fast-GraphRAG | 56.95 | 48.55 | 56.41 | 46.18 | 52.02 |
| 6 | MS-GraphRAG (local) | 49.29 | 50.93 | 64.40 | 39.10 | 50.93 |
| 7 | RAG (w/ rerank) | 60.92 | 42.93 | 51.30 | 38.26 | 48.35 |
| 8 | LightRAG | 58.62 | 49.07 | 48.85 | 23.80 | 45.09 |
| 9 | HippoRAG | 52.93 | 38.52 | 48.70 | 38.85 | 44.75 |

Key Takeaways

-

1

A well-architected GraphRAG retrieval layer consistently outperforms a weaker pipeline running a more expensive model, regardless of LLM choice.

-

2

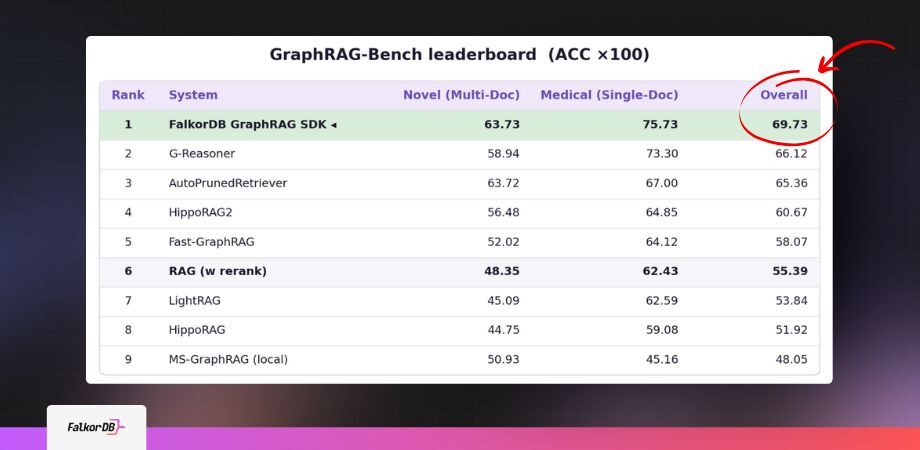

FalkorDB GraphRAG SDK 1.0 scores 63.73 overall on GraphRAG-Bench Novel and 75.73 on the Medical corpus — ranking #1 on both public leaderboards.

-

3

A 1,000-document ingestion costs roughly $5–6 in LLM tokens using GPT-4o-mini, with each additional query adding approximately $0.001.

This how-to guide targets software architects and senior developers integrating GraphRAG into production AI pipelines. It examines why standard RAG implementations fail at scale, what FalkorDB GraphRAG SDK 1.0 does differently, and what the GraphRAG-Bench benchmark results mean for your architecture decisions.

Why Standard RAG Fails in Production

Retrieval-Augmented Generation (RAG) is the architecture pattern that grounds LLM responses in external documents by retrieving relevant text chunks at query time. Standard vector-based RAG retrieves semantically similar text fragments using embedding distance, then passes them to an LLM for answer synthesis.
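To make the vector retrieval step concrete, here is a minimal sketch of top-k chunk selection by cosine similarity. The three-dimensional embeddings and chunk texts are toy values invented for illustration; a real pipeline would obtain embeddings from a model and search them with a vector index.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def top_k_chunks(query_vec, chunks, k=2):
    """Return the k chunk texts whose embeddings are closest to the query."""
    scored = [(cosine_similarity(query_vec, emb), text) for text, emb in chunks]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [text for _, text in scored[:k]]

# Toy (text, embedding) pairs; values are illustrative assumptions.
CHUNKS = [
    ("Alice joined Acme in 2019.", [0.9, 0.1, 0.0]),
    ("Acme sponsored the 2021 summit.", [0.1, 0.9, 0.1]),
    ("The cafeteria menu changes weekly.", [0.0, 0.1, 0.9]),
]
QUERY = [0.8, 0.3, 0.0]

retrieved = top_k_chunks(QUERY, CHUNKS, k=2)
```

The result is a ranked list of text fragments. Nothing in it records that Alice and the summit are connected through Acme, which is the gap the rest of this guide addresses.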

The problem surfaces at scale. Vector retrieval returns text chunks, not structured knowledge. Multi-hop questions, cross-document synthesis, and complex reasoning tasks all degrade badly as document volumes grow. The system that looked accurate at 1 document begins to fail at 100.


The failure modes are well-documented across production teams:

- Token costs escalate non-linearly: production queries run 3-5x longer than test queries, and a single comparative question can trigger dozens of LLM calls through retrieval multiplication.

- Knowledge extraction pipelines hallucinate entities and relationships, creating brittle graph structures that require expensive manual correction.

- Poor default configurations require weeks of tuning before any system produces reliable answers in a new domain.

- Graph construction overhead consumes compute budget before you have answered a single question, skewing cost models built on demo-scale data.

According to a 2026 analysis, 72% to 80% of enterprise RAG implementations are failing to move into production. The issue is not that retrieval does not work. It is that production AI systems require deterministic reasoning, traceability, and live context, none of which flat vector search provides reliably.

What GraphRAG Actually Solves

Graph Retrieval-Augmented Generation (GraphRAG) replaces the flat chunk-retrieval model with a knowledge graph layer. During ingestion, an NLP pipeline extracts entities and their relationships from documents and stores them as nodes and edges in a graph database. At query time, the system traverses those relationships rather than scanning flat embedding vectors.

This structural difference resolves the core failure modes. Graph traversal supports multi-hop reasoning natively: a question connecting Person A to Organization B to Event C follows edges rather than relying on embedding proximity. Cross-document synthesis works because entity resolution links the same concept across multiple source files.
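The Person A to Organization B to Event C example can be sketched as a breadth-first search over stored triples. This is an illustrative in-memory model, not the SDK's query path; the relationship names (`WORKS_AT`, `HOSTED`, `ATTENDED`) and helper functions are assumptions made for the example.

```python
from collections import deque

# (source, relationship, target) triples, as an extraction step might emit them.
EDGES = [
    ("Person A", "WORKS_AT", "Organization B"),
    ("Organization B", "HOSTED", "Event C"),
    ("Person D", "ATTENDED", "Event C"),
]

def build_adjacency(edges):
    """Index outgoing edges by source node."""
    adjacency = {}
    for src, rel, dst in edges:
        adjacency.setdefault(src, []).append((rel, dst))
    return adjacency

def find_path(adjacency, start, goal, max_hops=3):
    """Breadth-first search returning [node, rel, node, ...] or None."""
    queue = deque([(start, [start])])
    visited = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        hops_so_far = (len(path) - 1) // 2
        if hops_so_far >= max_hops:
            continue  # do not expand beyond the hop budget
        for rel, nxt in adjacency.get(node, []):
            if nxt not in visited:
                visited.add(nxt)
                queue.append((nxt, path + [rel, nxt]))
    return None

ADJ = build_adjacency(EDGES)
path = find_path(ADJ, "Person A", "Event C")
```

The returned path names every edge it followed, which is what makes a graph-backed answer traceable in a way that an embedding score is not.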

Microsoft’s GraphRAG research (Edge et al., 2024) demonstrated that graph-based retrieval achieved 86% comprehensiveness on complex multi-entity queries, compared to 57% for traditional vector RAG on the same evaluation set. The gap is not marginal. It represents a category difference in answer quality for any use case involving connected knowledge.

"The issue isn't that retrieval doesn't work. It's that production AI systems require deterministic reasoning, traceability, and live context."

Todd Blaschka, CSO Online, February 2026

Figure: Standard RAG vs GraphRAG. Production failure modes and how a graph layer resolves each.

FalkorDB GraphRAG SDK 1.0: What Changed

FalkorDB GraphRAG SDK 1.0 is the result of a year of client deployments, informed by real-world failure patterns and academic research. The central insight driving the redesign: the harness matters more than the model. A well-architected retrieval and reasoning layer consistently outperforms a weak pipeline running a more expensive model.

Version 1.0 ships with the following changes from previous releases:

- Modular API with an async-first architecture and strategy pattern throughout, enabling cleaner integration into existing service topologies.

- Improved knowledge extraction that captures more entity relationships with higher fidelity, reducing hallucinated edges during ingestion.

- Reduced query-time compute and lower LLM token consumption, decoupling retrieval steps from expensive model calls wherever possible.

- Sensible defaults built on a default ontology of 11 common business concepts (People, Organizations, Products, Events, and more), which produce a working system in days, not weeks.

- LLM-agnostic integration supporting OpenAI, Anthropic, Google, Cohere, local open-source models, and 100+ others through a unified interface.

- Multi-path retrieval combining graph traversal and semantic search, with ranked result merging across retrieval strategies.

- An onboarding UI that reduces the setup time required to validate a new knowledge graph configuration.

Figure: Category Breakdown, GraphRAG-Bench Medical. Answer Correctness (ACC) per question type. Closest competitor: G-Reasoner.
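The async-first, strategy-pattern, and multi-path retrieval items in the list above can be illustrated together. The sketch below is not the SDK's API: `RetrievalStrategy`, `StaticStrategy`, and `multi_path_retrieve` are hypothetical names, and reciprocal rank fusion is used here as one common way to merge ranked lists, since the source does not say which merging method the SDK applies.

```python
import asyncio
from typing import Protocol

class RetrievalStrategy(Protocol):
    """Any object with an async retrieve() can be plugged in (hypothetical interface)."""
    async def retrieve(self, query: str) -> list[str]: ...

class StaticStrategy:
    """Stand-in strategy that returns a fixed ranking, for illustration only."""
    def __init__(self, ranking):
        self._ranking = ranking

    async def retrieve(self, query: str) -> list[str]:
        await asyncio.sleep(0)  # yield control, as a real I/O call would
        return list(self._ranking)

def reciprocal_rank_fusion(rankings, k=60):
    """Merge ranked lists: each item scores sum(1 / (k + rank)) over the lists."""
    scores = {}
    for ranking in rankings:
        for rank, item in enumerate(ranking, start=1):
            scores[item] = scores.get(item, 0.0) + 1.0 / (k + rank)
    # Highest score first; ties broken alphabetically for determinism.
    return sorted(scores, key=lambda item: (-scores[item], item))

async def multi_path_retrieve(query, strategies):
    """Run all strategies concurrently, then merge their rankings."""
    rankings = await asyncio.gather(*(s.retrieve(query) for s in strategies))
    return reciprocal_rank_fusion(rankings)

graph_path = StaticStrategy(["e1", "e2", "e3"])    # e.g. graph traversal hits
vector_path = StaticStrategy(["e2", "e4", "e1"])   # e.g. semantic search hits
merged = asyncio.run(multi_path_retrieve("who hosted Event C?", [graph_path, vector_path]))
```

Items that appear in both rankings rise to the top, and adding a third retrieval path only requires another object with a `retrieve` coroutine.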

The SDK is a full rewrite from v0.x. It uses a different API, different schema, and different storage layout. Teams upgrading from 0.x should treat this as a migration, not an upgrade. The v0 branch remains available at legacy-v0 for teams that need continuity.

Benchmark Results: GraphRAG-Bench Novel and Medical

FalkorDB benchmarked GraphRAG SDK against eight other GraphRAG and RAG systems on the GraphRAG-Bench Novel dataset. The evaluation covers 2,010 questions across four task types: Fact Retrieval, Complex Reasoning, Contextual Summarization, and Creative Generation. All tests ran on a MacBook Air (Apple M3, 24 GB) using GPT-4o-mini via Azure OpenAI for both answer generation and scoring.

Overall Scores

- FalkorDB GraphRAG SDK: 63.73

- Closest competitor: 63.72 (AutoPrunedRetriever)

Category Breakdown

- Complex Reasoning: 58.63 (FalkorDB SDK)

- Contextual Summarization: 69.54 (FalkorDB SDK)

- Fact Retrieval: 65.22 (FalkorDB SDK, the highest score of the nine systems)

- Creative Generation: 57.08 (FalkorDB SDK)
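Per-category positions can be recomputed directly from the Novel leaderboard. The sketch below copies the published scores into a dictionary and ranks systems within one category; the `rank_in_category` helper is a name introduced here for illustration.

```python
CATEGORIES = (
    "Fact Retrieval",
    "Complex Reasoning",
    "Contextual Summarize",
    "Creative Generation",
)

# Scores copied from the GraphRAG-Bench Novel leaderboard, in CATEGORIES order.
LEADERBOARD = {
    "FalkorDB GraphRAG-SDK": (65.22, 58.63, 69.54, 57.08),
    "AutoPrunedRetriever": (45.99, 62.80, 83.10, 62.97),
    "G-Reasoner": (60.07, 53.92, 71.28, 50.48),
    "HippoRAG2": (60.14, 53.38, 64.10, 48.28),
    "Fast-GraphRAG": (56.95, 48.55, 56.41, 46.18),
    "MS-GraphRAG (local)": (49.29, 50.93, 64.40, 39.10),
    "RAG (w/ rerank)": (60.92, 42.93, 51.30, 38.26),
    "LightRAG": (58.62, 49.07, 48.85, 23.80),
    "HippoRAG": (52.93, 38.52, 48.70, 38.85),
}

def rank_in_category(system, category):
    """1-based position of a system when sorted by one category's score."""
    idx = CATEGORIES.index(category)
    ordered = sorted(LEADERBOARD, key=lambda name: LEADERBOARD[name][idx], reverse=True)
    return ordered.index(system) + 1

sdk_ranks = {c: rank_in_category("FalkorDB GraphRAG-SDK", c) for c in CATEGORIES}
```

Running the same helper for another system shows how differently two near-identical overall scores can be composed.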

The SDK also ranked first on the GraphRAG-Bench Medical leaderboard, demonstrating that the architecture generalizes across domain types, not just narrative fiction.

Complex Reasoning and Contextual Summarization are the categories where retrieval-only approaches break down most visibly. These are precisely the tasks that predict real-world answer quality in enterprise knowledge bases, legal document review, medical literature synthesis, and multi-product support systems. On the Novel leaderboard, AutoPrunedRetriever posts the top score in both categories (62.80 and 83.10), while the SDK scores 58.63 and 69.54 and takes the overall lead through Fact Retrieval (65.22). Scores on these two categories therefore carry more signal than an aggregate score alone.

Token Cost & Throughput — 1,000 Documents

Extrapolated from a 20-document GraphRAG-Bench run using gpt-4o-mini.

Cost (gpt-4o-mini)

- Per document: ~17,600 tokens

- Per document cost: ~$0.0055

- Ingest API calls: 71

- Finalize API calls: 2

- 1,000-doc ingest: ~17.6M tokens / ~$5.50

- Per retrieval query: ~$0.001

Ingestion Throughput

- Document size: 1,000–1,350 words

- Per document: 21–33 s

- 100 documents: ~53 min

- 1,000 documents: ~10 h

- Bottleneck: Ingestion throughput, not graph capacity. Parallelize across workers at scale.

Token Cost Modeling for Production Scale

Token cost is where GraphRAG projects regularly stall after the POC phase. Graph construction is LLM-intensive by design: every document passes through extraction, entity resolution, and finalization steps before producing a queryable graph. The cost model differs structurally from vector indexing.

Based on the GraphRAG-Bench evaluation data (20 documents, 52,810 ingestion tokens at GPT-4o-mini pricing), the per-document token cost is approximately 17,600 tokens, or $0.0055 per document. At 1,000 documents, ingestion costs roughly $5-6 in LLM tokens. Each retrieval query adds approximately $0.001 on top.
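The extrapolation is simple linear scaling, and a small helper makes it easy to rerun for your own document and query volumes. The constants are the per-document and per-query figures quoted above; the function name and return shape are assumptions for this sketch, and real costs will vary with document length, model, and provider pricing.

```python
# Figures quoted in this article for GPT-4o-mini.
TOKENS_PER_DOC = 17_600
COST_PER_DOC_USD = 0.0055
COST_PER_QUERY_USD = 0.001

def estimate_cost(n_docs, n_queries=0):
    """Linear estimate of ingestion tokens and LLM spend in USD."""
    ingest_cost = n_docs * COST_PER_DOC_USD
    query_cost = n_queries * COST_PER_QUERY_USD
    return {
        "ingest_tokens": n_docs * TOKENS_PER_DOC,
        "ingest_cost_usd": round(ingest_cost, 2),
        "query_cost_usd": round(query_cost, 2),
        "total_cost_usd": round(ingest_cost + query_cost, 2),
    }

# 1,000 documents plus 10,000 retrieval queries.
estimate = estimate_cost(1_000, n_queries=10_000)
```

At these rates, query volume overtakes ingestion as the larger line item once a 1,000-document corpus has served about 5,500 queries.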

The SDK works with any major LLM provider: OpenAI, Anthropic, Google, Cohere, and 100+ others, including local open-source models. The best benchmark results were achieved using GPT-4.1, but the architecture does not require any specific model and does not lock teams into a single provider.

Token Budget Breakdown (GPT-4o-mini, per 1,000 docs)

| Phase | API Calls | Tokens | Cost |
|---|---|---|---|
| Ingest | 71 | ~17.6M | ~$5.50 |
| Finalize | 2 | included in Ingest | included in Ingest |
| Retrieval | | | ~$0.001 per query |

The ingestion time is the real constraint at scale, not storage or graph traversal. Based on benchmark timing data, ingestion runs at approximately 21-33 seconds per document for documents in the 1,000-1,350 word range. At that rate, 100 documents takes approximately 53 minutes, and 1,000 documents takes approximately 10 hours.
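A rough wall-clock range follows from the per-document timing. The sketch below assumes ideal linear scaling across workers, which ignores LLM rate limits and any per-batch overhead; `ingest_hours` is a name introduced for this example.

```python
import math

# Per-document ingestion time range quoted above, in seconds.
SECONDS_PER_DOC = (21, 33)

def ingest_hours(n_docs, workers=1):
    """Return (best_case_hours, worst_case_hours) for n_docs split across workers."""
    docs_per_worker = math.ceil(n_docs / workers)
    fastest, slowest = SECONDS_PER_DOC
    return (docs_per_worker * fastest / 3600, docs_per_worker * slowest / 3600)

sequential = ingest_hours(1_000)             # one worker
parallel = ingest_hours(1_000, workers=10)   # ten workers, ideal scaling
```

With one worker the range comes out at roughly 5.8 to 9.2 hours for 1,000 documents, so the quoted ~53 minutes and ~10 hours sit at or slightly above the slow end of the per-document range.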

This means teams planning large-scale ingestion should pipeline document processing across parallel workers, not wait for sequential completion. The bottleneck is ingestion throughput, not FalkorDB instance capacity.
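One way to pipeline ingestion is a bounded worker pool. The sketch below uses threads from the standard library because the work is dominated by waiting on LLM calls. `ingest_document` is a hypothetical placeholder for whatever per-document ingestion call your integration exposes, not an SDK function.

```python
from concurrent.futures import ThreadPoolExecutor, as_completed

def ingest_document(doc_id: str) -> str:
    """Hypothetical placeholder for one extract / resolve / write cycle."""
    return f"ingested:{doc_id}"

def ingest_in_parallel(doc_ids, max_workers=4):
    """Ingest documents concurrently; collect successes and failures separately."""
    results, failures = {}, {}
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {pool.submit(ingest_document, doc_id): doc_id for doc_id in doc_ids}
        for future in as_completed(futures):
            doc_id = futures[future]
            try:
                results[doc_id] = future.result()
            except Exception as exc:  # keep going; retry failed documents later
                failures[doc_id] = exc
    return results, failures

done, failed = ingest_in_parallel([f"doc-{i}" for i in range(10)], max_workers=4)
```

Keeping failures in a separate map lets a long run finish and retry only the documents that hit rate limits or transient errors. `max_workers` should be sized against your provider's rate limits rather than local CPU count.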

Ontology Design: Defaults, Extensions, and Domain Specificity

The ontology is the schema for your knowledge graph. It defines which entity types the extraction pipeline captures (People, Organizations, Products, Events, and so on) and what relationship types connect them. A poorly designed ontology produces a sparse, noisy graph. A well-designed ontology enables precise traversal on the queries that matter most.

The SDK ships with a default ontology of 11 common business concepts. This covers most general-purpose deployments and produces a working graph without any schema configuration. Teams can extend it with domain-specific entity types and relationship definitions for specialized use cases such as clinical records, financial instruments, or technical documentation.
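The idea of a base ontology plus domain extensions can be sketched with plain dataclasses. This is not the SDK's schema API: the `Ontology` class, the `extend` method, and every relationship name are hypothetical, and only the four default concepts named in this article appear in the base set.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Ontology:
    """Entity types plus (source_type, relation, target_type) triples."""
    entity_types: frozenset
    relation_types: frozenset

    def extend(self, entity_types=(), relation_types=()):
        """Return a new ontology with extra types; the original is unchanged."""
        merged_entities = self.entity_types | frozenset(entity_types)
        merged_relations = self.relation_types | frozenset(relation_types)
        for src, rel, dst in merged_relations:
            if src not in merged_entities or dst not in merged_entities:
                raise ValueError(f"relation {rel!r} references an unknown entity type")
        return Ontology(merged_entities, merged_relations)

# Four of the default concepts named in the article; relation name is illustrative.
BASE = Ontology(
    entity_types=frozenset({"Person", "Organization", "Product", "Event"}),
    relation_types=frozenset({("Person", "WORKS_AT", "Organization")}),
)

# A clinical-records extension with hypothetical domain types.
CLINICAL = BASE.extend(
    entity_types={"Medication", "Condition"},
    relation_types={("Medication", "TREATS", "Condition")},
)
```

Validating relationship endpoints at extension time catches schema typos before any tokens are spent on extraction.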

Figure: Default Ontology, 11 Business Concepts. Ships with the SDK. Extend per domain by subclassing the schema. Adding domain concepts after ingestion requires re-running extraction on affected documents.

The practical recommendation is to start with the default ontology for the first iteration, evaluate which query types miss expected entities, and then extend the schema incrementally. Adding domain concepts after initial ingestion requires re-running extraction against affected documents, so planning the ontology scope before ingestion at scale saves compute.

Dynamic Knowledge Graphs: Handling Changing Data

Production knowledge graphs are not static artifacts. Documents update, facts change, and new source material arrives continuously. A graph that requires full reconstruction on every update does not scale operationally.

The SDK handles document updates through graph merging rather than full rebuild. When new or updated documents arrive, the system merges them into the existing knowledge graph, preserving the current graph state and adding new entities and relationships incrementally. This means the graph evolves with your data rather than requiring a cold-start rebuild on each ingestion cycle.
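Incremental merging can be pictured as an upsert over nodes and edges. The sketch below uses an in-memory dictionary as a stand-in for the graph store; the data layout and the `merge_graph` function are assumptions for illustration and do not reflect the SDK's internal merge logic.

```python
def merge_graph(graph, entities, relations):
    """Merge new extraction output into an existing graph in place.

    graph:     {"nodes": {entity_id: properties}, "edges": set of (src, rel, dst)}
    entities:  {entity_id: properties} from the new or updated document
    relations: iterable of (src, rel, dst) triples from that document
    Returns (nodes_added, edges_added).
    """
    nodes_added = 0
    for entity_id, props in entities.items():
        if entity_id in graph["nodes"]:
            graph["nodes"][entity_id].update(props)  # enrich the existing node
        else:
            graph["nodes"][entity_id] = dict(props)
            nodes_added += 1
    edges_before = len(graph["edges"])
    graph["edges"].update(relations)  # set semantics drop duplicate edges
    return nodes_added, len(graph["edges"]) - edges_before

GRAPH = {
    "nodes": {"acme": {"type": "Organization"}, "alice": {"type": "Person"}},
    "edges": {("alice", "WORKS_AT", "acme")},
}

added = merge_graph(
    GRAPH,
    entities={"acme": {"hq": "Berlin"}, "bob": {"type": "Person"}},
    relations=[("alice", "WORKS_AT", "acme"), ("bob", "WORKS_AT", "acme")],
)
```

Existing nodes and edges survive the merge, and only genuinely new entities and relationships are added.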

One caveat to note: automatic removal of outdated or superseded facts is currently a roadmap item, not a shipped feature. Teams working with rapidly changing factual data should build a manual invalidation step into their update workflow until that capability ships.
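A manual invalidation step can be built on edge provenance: record which documents support each fact, and drop facts that lose their last supporting document before merging the updated version. This is one possible design under assumed data structures, not a description of the roadmap feature.

```python
def add_facts(edge_sources, doc_id, triples):
    """Record that doc_id supports each (src, rel, dst) triple."""
    for triple in triples:
        edge_sources.setdefault(triple, set()).add(doc_id)

def invalidate_document(edge_sources, doc_id):
    """Withdraw doc_id's support; delete facts no other document backs."""
    removed = []
    for triple in list(edge_sources):  # copy keys so we can delete while looping
        sources = edge_sources[triple]
        sources.discard(doc_id)
        if not sources:
            del edge_sources[triple]
            removed.append(triple)
    return removed

EDGE_SOURCES = {}
add_facts(EDGE_SOURCES, "doc-1", [("alice", "WORKS_AT", "acme"), ("acme", "BASED_IN", "berlin")])
add_facts(EDGE_SOURCES, "doc-2", [("acme", "BASED_IN", "berlin")])

# doc-1 was revised: remove its old facts, then merge the new extraction.
stale = invalidate_document(EDGE_SOURCES, "doc-1")
add_facts(EDGE_SOURCES, "doc-1", [("alice", "WORKS_AT", "globex")])
```

Facts corroborated by other documents stay in the graph, so a single revised file cannot silently erase knowledge that is still supported elsewhere.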

Multi-Tenancy Architecture

Enterprise and SaaS deployments require data isolation between tenants. The SDK supports isolated knowledge graphs per customer, team, or user inside a shared environment. Each tenant’s graph is separated at the data layer, not just at the query layer.

This architecture maps directly to multi-tenant SaaS patterns where a single infrastructure deployment serves multiple customers with non-overlapping data access. A legal platform, for example, can run separate knowledge graphs per client matter without any risk of cross-client entity leakage during graph traversal.
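Data-layer isolation can be sketched as one graph object per tenant, where every read and write is scoped by a validated tenant identifier. The `TenantGraphs` class below is an in-memory stand-in written for this article. In a real deployment each tenant would map to a separate named graph in the database, and the naming rule shown is an assumption.

```python
import re

class TenantGraphs:
    """Keeps a separate edge set per tenant; no operation spans tenants."""

    _VALID_ID = re.compile(r"^[a-z0-9_-]{1,64}$")

    def __init__(self):
        self._graphs = {}

    def _graph_for(self, tenant_id):
        if not self._VALID_ID.match(tenant_id):
            raise ValueError(f"invalid tenant id: {tenant_id!r}")
        return self._graphs.setdefault(tenant_id, set())

    def add_edge(self, tenant_id, src, rel, dst):
        self._graph_for(tenant_id).add((src, rel, dst))

    def neighbors(self, tenant_id, src):
        """Targets reachable from src in one hop, within this tenant only."""
        return sorted(dst for s, _, dst in self._graph_for(tenant_id) if s == src)

TENANTS = TenantGraphs()
TENANTS.add_edge("client-a", "matter-17", "INVOLVES", "acme")
TENANTS.add_edge("client-b", "matter-17", "INVOLVES", "globex")

a_view = TENANTS.neighbors("client-a", "matter-17")
b_view = TENANTS.neighbors("client-b", "matter-17")
```

Both tenants use the same node name, yet traversal from one tenant never reaches the other tenant's entities because the two edge sets are never joined.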

Figure: Per-Tenant Knowledge Graphs. Isolation at the data layer. One runtime, N separate graphs. No cross-tenant entity leakage during graph traversal.

What This Means for Software Architects Evaluating GraphRAG

Software architects evaluating GraphRAG infrastructure need a production-grade harness: high accuracy out of the box, predictable LLM token costs, modular integration points, and support for incremental updates without requiring full rebuilds. Research prototypes do not meet that bar.

The benchmark results answer the core question of retrieval accuracy. The token cost data answers the infrastructure cost question. The multi-tenancy and dynamic update capabilities answer the operational architecture question. The LLM-agnostic interface answers the vendor dependency question.

The remaining question for most teams is integration complexity. Version 1.0 ships with a new modular API, sensible defaults, and an onboarding UI specifically to reduce that friction. You can install the release candidate with:

pip install graphrag-sdk

The stable release branch at 0.x remains unaffected. Teams evaluating 1.0 can do so in parallel without disrupting existing deployments.


FAQ

What is GraphRAG and how does it differ from standard RAG?

GraphRAG augments LLM retrieval by building a structured knowledge graph from documents, enabling multi-hop reasoning and entity-relationship traversal that vector-only RAG cannot perform reliably at scale.

How much does it cost to run a GraphRAG ingestion pipeline at scale?

Using GPT-4o-mini, ingesting 1,000 documents costs approximately $5-6 in LLM tokens. Each retrieval query adds roughly $0.001 on top of ingestion costs.

Does FalkorDB GraphRAG SDK support multi-tenancy?

Yes. Each customer, team, or user gets an isolated knowledge graph inside a shared environment, designed for SaaS and enterprise deployments where data separation is required.

References and citations

- [1] FalkorDB. “GraphRAG SDK Benchmark: Why RAG Breaks in the Real World.” FalkorDB Internal Benchmark Documentation, 2025. https://github.com/FalkorDB/GraphRAG-SDK

- [2] Blaschka, Todd. “GraphWise Aims to Boost AI Accuracy with Knowledge Graphs.” LinkedIn / CSO Online, February 17, 2026. https://www.linkedin.com/posts/toddblaschka_graphwise-aims-to-boost-ai-accuracy-with-activity-7429651935072288768-Etsl

- [3] Edge, Darren et al. “From Local to Global: A Graph RAG Approach to Query-Focused Summarization.” Microsoft Research, April 2024. https://www.microsoft.com/en-us/research/blog/graphrag-unlocking-llm-discovery-on-narrative-private-data/

- [4] FalkorDB. “FalkorDB GraphRAG SDK: Benchmark Results.” GraphRAG-SDK GitHub Repository, 2025. https://github.com/FalkorDB/GraphRAG-SDK/releases/tag/v1.0.0rc1

- [5] FalkorDB. “GraphRAG-SDK 2.0 Narrative and Q&A.” Internal product documentation and benchmark Q&A, 2025.

- [6] Towards AI. “Why 90% of Agentic RAG Projects Fail (And How to Build One That Actually Works).” Towards AI, January 2026. https://towardsai.net/p/machine-learning/why-90-of-agentic-rag-projects-fail-and-how-to-build-one-that-actually-works-in-product