Highlights

- Graph memory stores entity relationships like (Alice)-[:ALLERGIC_TO]->(TreeNuts), letting agents traverse verified facts instead of guessing via nearest-neighbor vector search.

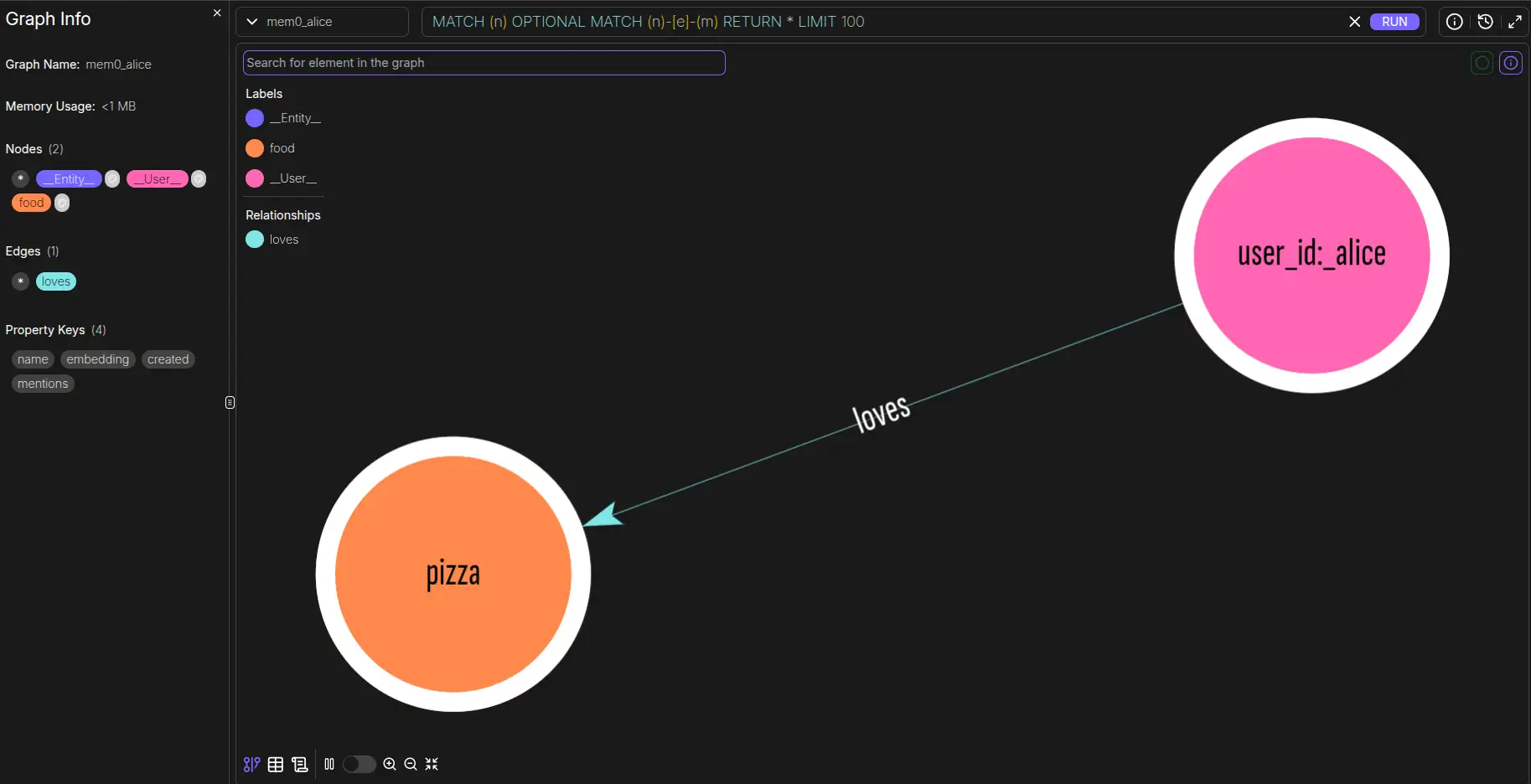

- mem0-falkordb auto-isolates each user into a dedicated graph (mem0_alice, mem0_bob), eliminating data leakage and keeping query time constant as you scale from 10 to 10,000 users.

- Runtime patching means zero forks: import the plugin, register it, and FalkorDB becomes Mem0's graph backend. In FalkorDB's published benchmark on aggregate expansion queries, its p99 latency stays under 140ms versus Neo4j's 46,900ms.

The current state of AI agent memory is, frankly, a bit like 1950s file cabinets. Most agentic frameworks rely on vector stores to provide long-term memory. While vector search is great for “find things that sound like this,” it’s inherently flat.

If an agent learns that “Alice is vegan” and “Alice is allergic to nuts,” a vector store treats these as two distinct points in a high-dimensional space. To the LLM, these are two separate fragments of information retrieved via nearest-neighbor search. But in reality, these aren’t just isolated strings; they are related attributes of a single entity (in this case, Alice).

When the agent’s memory is represented as a graph, it doesn’t just store “Alice” and “Vegan” as embeddings. It stores a relationship: (Alice)-[:FOLLOWS_DIET]->(Vegan). This structural connectivity allows agents to reason across facts rather than just retrieving them.

To address this challenge, we’ve released the FalkorDB graph store plugin for Mem0. It adds persistent, high-performance graph memory to your AI agents with a single line of code.

Why Graph Memory?

Traversal vs. Search

To understand why your agent needs a graph, consider a common scenario from one of FalkorDB’s demos:

Alice is a vegan software engineer, allergic to tree nuts, and currently leading a GraphQL migration at her company. She is also planning a trip to Japan.

In a conventional vector-only memory setup, a query like “What should Alice eat in Japan?” triggers a similarity search. The results might include her trip to Japan and perhaps her nut allergy, but could miss her veganism if the embedding of that fact sits too far from the query’s embedding to make the top results.

In a knowledge graph, the agent navigates the relationships. It starts at the node Alice, traverses to her DietaryPreferences and sees Vegan, traverses to Allergies and sees TreeNuts, and checks her Destination, Japan. The agent isn’t “guessing” based on semantic similarity; it is traversing a verified web of facts, so every constraint the answer depends on reaches the LLM.
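The difference can be sketched with a toy, in-memory graph. The triples, the `build_adjacency` and `facts_for` helpers, and the `LEADS` relationship are illustrative assumptions, not how Mem0 or FalkorDB store data; the point is that the facts are reached by following typed edges out of the Alice node rather than by ranking text by similarity to the query.

```python
from collections import defaultdict

# Illustrative triples for the Alice scenario; not Mem0's storage format.
TRIPLES = [
    ("Alice", "FOLLOWS_DIET", "Vegan"),
    ("Alice", "ALLERGIC_TO", "TreeNuts"),
    ("Alice", "PLANS_TO_VISIT", "Japan"),
    ("Alice", "LEADS", "GraphQLMigration"),
]


def build_adjacency(triples):
    """Index outgoing edges per node: node -> [(relation, target), ...]."""
    adj = defaultdict(list)
    for src, rel, dst in triples:
        adj[src].append((rel, dst))
    return adj


def facts_for(adj, entity, relations):
    """Follow only the requested relationship types out of `entity`."""
    found = {rel: [] for rel in relations}
    for rel, dst in adj.get(entity, []):
        if rel in found:
            found[rel].append(dst)
    return found


adj = build_adjacency(TRIPLES)
context = facts_for(adj, "Alice", ["FOLLOWS_DIET", "ALLERGIC_TO", "PLANS_TO_VISIT"])
print(context)
# {'FOLLOWS_DIET': ['Vegan'], 'ALLERGIC_TO': ['TreeNuts'], 'PLANS_TO_VISIT': ['Japan']}
```

Against a real FalkorDB graph, the same one-hop lookup would be a Cypher pattern such as `MATCH (a {name: 'Alice'})-[r]->(b) RETURN type(r), b.name`, where the `name` property is again an assumption.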

The mem0-falkordb plugin

mem0-falkordb is a drop-in plugin that registers FalkorDB as the graph backend for Mem0. It uses Python runtime patching, meaning you don’t need to fork Mem0 or modify its source code. You simply import the plugin, register it, and your agents are backed by an ultra-fast graph database.
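Runtime patching here means the plugin adds itself to Mem0 from the outside when it is registered. The sketch below shows the general Python pattern with a provider registry; `GraphStoreFactory`, `Neo4jGraph`, `FalkorDBGraph`, and this `register()` are hypothetical stand-ins, not Mem0's or mem0-falkordb's actual internals.

```python
# Hypothetical stand-ins illustrating registry patching; not Mem0's real classes.


class Neo4jGraph:
    """Backend that ships with the (hypothetical) library."""

    def __init__(self, config):
        self.config = config


class GraphStoreFactory:
    """Library-owned factory mapping provider names to backend classes."""

    provider_to_class = {"neo4j": Neo4jGraph}

    @classmethod
    def create(cls, provider, config):
        backend_cls = cls.provider_to_class.get(provider)
        if backend_cls is None:
            raise ValueError(f"Unsupported graph store provider: {provider}")
        return backend_cls(config)


# --- Plugin side: the library source above is never edited. ---


class FalkorDBGraph:
    """Backend supplied by the plugin."""

    def __init__(self, config):
        self.config = config


def register():
    """Patch the factory's registry at runtime so 'falkordb' becomes a valid provider."""
    GraphStoreFactory.provider_to_class["falkordb"] = FalkorDBGraph


register()
store = GraphStoreFactory.create("falkordb", {"host": "localhost", "port": 6379})
print(type(store).__name__)  # FalkorDBGraph
```

The trade-off of this pattern is that the plugin depends on the library's internal names, so the patch has to track upstream changes to them.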

Setup

Setup takes only a few lines. Here is how to initialize a Mem0 instance with FalkorDB as the persistent graph store:

```python
from mem0_falkordb import register
from mem0 import Memory

# 1. Register FalkorDB as a Mem0 provider
register()

# 2. Define your configuration
config = {
    "graph_store": {
        "provider": "falkordb",
        "config": {
            "host": "localhost",
            "port": 6379,
            "database": "mem0",
        },
    },
    "llm": {
        "provider": "openai",
        "config": {"model": "gpt-5-mini"},
    },
}

# 3. Initialize Memory
m = Memory.from_config(config)

# 4. Add data and search
m.add("I'm a vegan software engineer allergic to nuts", user_id="alice")
results = m.search("what can alice eat?", user_id="alice")
print(results)
```

With this configuration, every piece of information passed to m.add() is parsed by the LLM, converted into entities and relations, and persisted in FalkorDB.
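As a rough illustration of the last step, the sketch below turns (source, relation, destination) triples, the shape of facts an LLM might extract from “I'm a vegan software engineer allergic to nuts”, into parameterized Cypher MERGE statements. The `Entity` label, the `name` property, the `triple_to_cypher` helper, and the sample triples are assumptions; Mem0's own extraction prompts and query templates (which also store embeddings) differ.

```python
def triple_to_cypher(source, relation, destination):
    """Build a parameterized MERGE for one extracted (source, relation, destination) triple."""
    # Cypher cannot parameterize relationship types, so normalize and inline the type.
    rel_type = "".join(
        ch if ch.isascii() and ch.isalnum() else "_" for ch in relation.strip().upper()
    )
    if not rel_type or not rel_type[0].isalpha():
        raise ValueError(f"cannot use {relation!r} as a relationship type")
    query = (
        "MERGE (s:Entity {name: $source}) "
        "MERGE (d:Entity {name: $destination}) "
        f"MERGE (s)-[:{rel_type}]->(d)"
    )
    return query, {"source": source, "destination": destination}


# Hypothetical triples an LLM might extract for user "alice".
extracted = [
    ("alice", "is a", "software engineer"),
    ("alice", "follows diet", "vegan"),
    ("alice", "allergic to", "nuts"),
]
for triple in extracted:
    query, params = triple_to_cypher(*triple)
    print(query, params)
```

With falkordb-py, each pair could then be executed as `graph.query(query, params)` on a graph obtained from `FalkorDB(...).select_graph(...)`.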

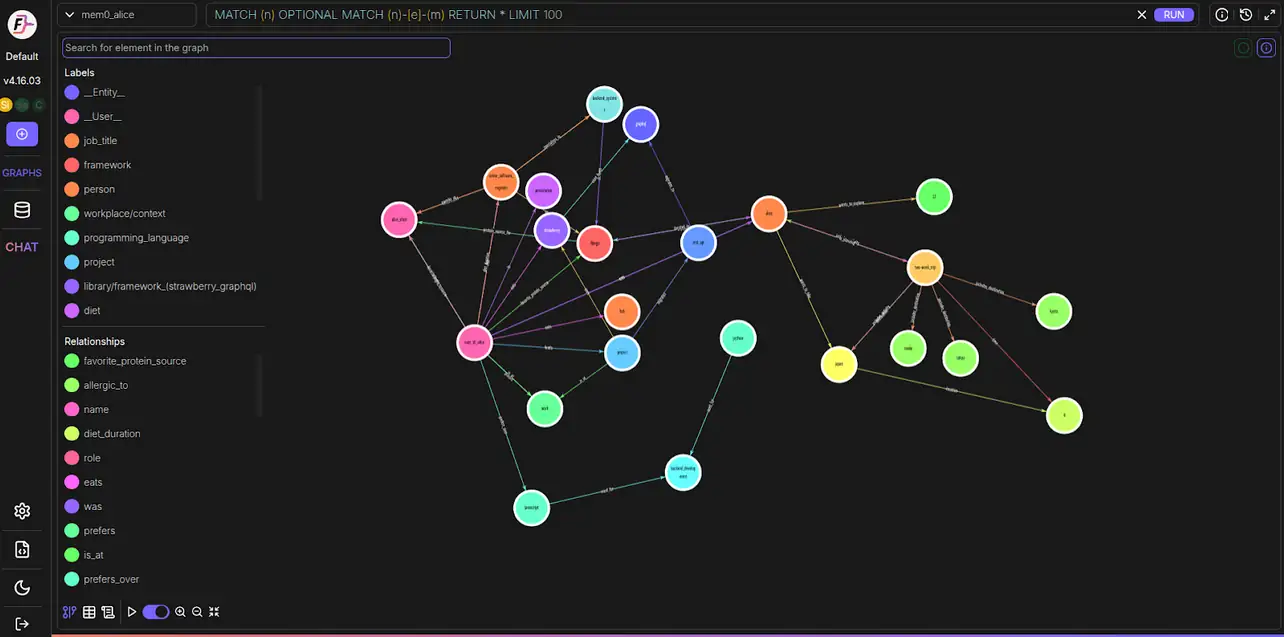

The Standout Feature: Per-User Graph Isolation

Rather than keeping every user in one shared graph and filtering by user, mem0-falkordb takes a different, more robust architectural approach: automatic graph isolation.

When you provide a user_id to Mem0, the FalkorDB plugin automatically maps that user to their own dedicated graph: mem0_alice, mem0_bob, mem0_carol.
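A minimal sketch of that mapping and of why it isolates users, assuming graph names are the configured `database` value (`mem0`) plus an underscore and the `user_id`. `graph_name_for` and the in-memory `PerUserGraphs` class are hypothetical; a real deployment keeps one FalkorDB graph per name instead of a Python list.

```python
import re
from collections import defaultdict

# Assumed naming rule: "<prefix>_<user_id>", with the prefix taken from "database".
_VALID_USER_ID = re.compile(r"[A-Za-z0-9_-]+")


def graph_name_for(user_id, prefix="mem0"):
    """Map a user_id to its dedicated graph name, e.g. 'alice' -> 'mem0_alice'."""
    # Reject unusual IDs instead of rewriting them, so two users never share a graph.
    if not _VALID_USER_ID.fullmatch(user_id or ""):
        raise ValueError(f"invalid user_id: {user_id!r}")
    return f"{prefix}_{user_id}"


class PerUserGraphs:
    """In-memory stand-in for a server that keeps one graph (a triple list) per user."""

    def __init__(self, prefix="mem0"):
        self.prefix = prefix
        self.graphs = defaultdict(list)

    def add(self, user_id, triple):
        self.graphs[graph_name_for(user_id, self.prefix)].append(triple)

    def search(self, user_id, term):
        # Only the caller's graph is scanned; no other user's data is ever read.
        graph = self.graphs.get(graph_name_for(user_id, self.prefix), [])
        term = term.lower()
        return [t for t in graph if any(term in part.lower() for part in t)]


store = PerUserGraphs()
store.add("alice", ("Alice", "PLANS_TO_VISIT", "Japan"))
store.add("carol", ("Carol", "COMPLETED", "BostonMarathon"))
print(graph_name_for("alice"))            # mem0_alice
print(store.search("alice", "marathon"))  # []
print(store.search("carol", "marathon"))  # [('Carol', 'COMPLETED', 'BostonMarathon')]
```

Searching Alice's graph for “marathon” finds nothing even though Carol's graph has a match, because the search never reads Carol's graph.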

Architecture Advantages

Why This Is Superior

Per-user graph isolation improves data safety, performance, and operational simplicity.

Zero Leakage

A query scoped to Bob's graph cannot touch Alice's data: the graph engine is operating on a different data structure, so there is no shared dataset for a missing filter to leak from.

Performance at Scale

As you move from 10 users to 10,000, per-user query time stays roughly constant. The graph engine only traverses that user's dedicated graph, rather than filtering through a global index that grows with every user.

Trivial Cleanup

If a user requests deletion of their data (GDPR/CCPA), you don't need to run complex, filtered MATCH ... DELETE queries. You simply run GRAPH.DELETE mem0_alice.
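In application code, the per-user deletion can be a single call. This sketch assumes the falkordb-py client, where `list_graphs()` returns existing graph names and `select_graph(name).delete()` issues GRAPH.DELETE, and reuses the `mem0_<user_id>` naming convention described above; the `purge_user` helper itself is hypothetical.

```python
def purge_user(db, user_id, prefix="mem0"):
    """Delete one user's entire graph; return True if a graph was removed.

    `db` is assumed to behave like a falkordb-py FalkorDB client.
    """
    graph_name = f"{prefix}_{user_id}"  # assumed naming: mem0_<user_id>
    if graph_name not in db.list_graphs():
        return False  # nothing stored for this user
    db.select_graph(graph_name).delete()  # sends GRAPH.DELETE <graph_name>
    return True


# Usage against a running server (requires `pip install falkordb`):
# from falkordb import FalkorDB
# db = FalkorDB(host="localhost", port=6379)
# purge_user(db, "alice")
```

Checking `list_graphs()` first makes the helper safe to call twice, which is convenient when deletion requests are retried.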

Memory Efficiency

Individual graphs let FalkorDB manage memory per user, which can improve cache hits and lower latency.

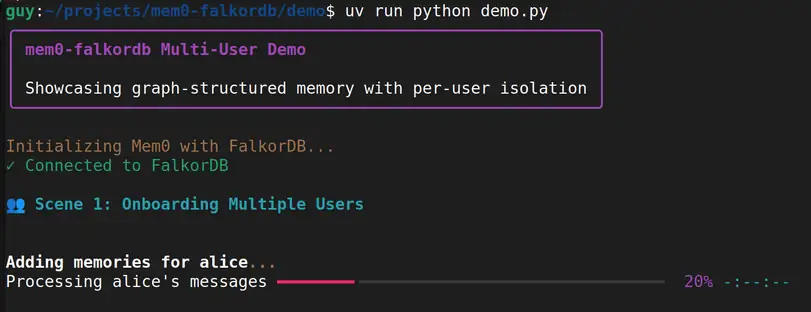

Demo

The demo/demo.py script provides a full lifecycle look at how this works in practice across five distinct “scenes.”

Scene 1 & 2: Onboarding and Retrieval

We initialize three vastly different users:

- Alice Chen: A backend engineer hiking the NH 48.

- Bob: An Italian chef and “soccer dad” in Boston.

- Dr. Carol Martinez: A cardiologist and marathoner researching ML at MIT.

When we ask “What is her research about?” with user_id="carol", the system ignores every fact stored for Alice and Bob. It returns Carol’s specific focus on ML and AFib.

Scene 3: Facts Over Time

Memory isn’t static. People change. In Scene 3, Alice informs the agent she is transitioning from vegan to pescatarian.

Mem0 and FalkorDB handle the resolution of contradictory facts. The graph updates: the (Alice)-[:FOLLOWS_DIET]->(Vegan) relationship is superseded or modified, and subsequent queries reflect her new diet. This is significantly harder to achieve in a pure vector store, where “old” embeddings often linger and pollute search results.
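Mem0 uses its LLM to decide which stored relations a new fact contradicts. The sketch below swaps that judgment for a fixed rule, purely to show the effect on the graph: for relationship types assumed to be single-valued (here, hypothetically, only `FOLLOWS_DIET`), a new fact replaces the old one instead of sitting beside it. `upsert_fact` and `SINGLE_VALUED` are illustrative names.

```python
# Hypothetical: relationship types that hold one current value per subject.
SINGLE_VALUED = {"FOLLOWS_DIET"}


def upsert_fact(triples, new_triple, single_valued=SINGLE_VALUED):
    """Return a new list with new_triple added, dropping facts it supersedes."""
    src, rel, _ = new_triple
    if rel in single_valued:
        # Replace any existing value for this (subject, relation) pair.
        kept = [t for t in triples if (t[0], t[1]) != (src, rel)]
    else:
        # Multi-valued relation: keep everything except an exact duplicate.
        kept = [t for t in triples if t != new_triple]
    return kept + [new_triple]


facts = [("Alice", "FOLLOWS_DIET", "Vegan"), ("Alice", "ALLERGIC_TO", "TreeNuts")]
facts = upsert_fact(facts, ("Alice", "FOLLOWS_DIET", "Pescatarian"))
print(facts)
# [('Alice', 'ALLERGIC_TO', 'TreeNuts'), ('Alice', 'FOLLOWS_DIET', 'Pescatarian')]
```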

Scene 4: Proving Isolation

We run a search for “marathons” against Alice’s graph. It returns nothing. We run the same search against Carol’s graph, and it returns her history in the Boston and NYC marathons. This proves that even with similar semantic concepts, the per-user isolation acts as a hard boundary.

Scene 5: Scaling Without Friction

The demo programmatically creates 10 synthetic users. Because of the isolation architecture, querying any one of them takes roughly the same time as it did when there was only one user: the total user count does not degrade an individual user’s experience.

Inspecting the Raw Graph

The “wow moment” for most developers comes when running inspect_graphs.py. This utility bypasses the Mem0 abstraction and connects directly to FalkorDB via falkordb-py to show you exactly what the LLM has synthesized.

When you run it after the demo, you’ll see an output similar to this:

```text
Graph: mem0_alice
├── Alice --[IS_A]--> SoftwareEngineer
├── Alice --[FOLLOWS_DIET]--> Vegan
├── Alice --[PLANS_TO_VISIT]--> Japan
├── Alice --[PREFERS]--> Python
└── Alice --[ALLERGIC_TO]--> TreeNuts

Graph: mem0_carol
├── Carol --[OCCUPATION]--> Cardiologist
├── Carol --[RESEARCHES]--> AFib
├── Carol --[AFFILIATED_WITH]--> MIT
└── Carol --[COMPLETED]--> BostonMarathon
```

This structure is built automatically from natural language. You didn’t have to define a schema, write Cypher CREATE statements, or manage nodes manually. The agent heard a fact and organized it into entities and typed relationships.
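If you want a similar view in your own tooling, the formatting step is small. This sketch assumes rows of (source, relation, destination), such as a falkordb-py query like `MATCH (s)-[r]->(d) RETURN s.name, type(r), d.name` would return in its `result_set`; the `name` property and the `render_graph` helper are assumptions, and the actual inspect_graphs.py may be written differently.

```python
def render_graph(graph_name, rows):
    """Format (source, relation, destination) rows as a tree like the output above."""
    lines = [f"Graph: {graph_name}"]
    for i, (src, rel, dst) in enumerate(rows):
        # The last edge gets a closing branch; the others get a tee.
        branch = "└──" if i == len(rows) - 1 else "├──"
        lines.append(f"{branch} {src} --[{rel}]--> {dst}")
    return "\n".join(lines)


rows = [("Carol", "OCCUPATION", "Cardiologist"), ("Carol", "RESEARCHES", "AFib")]
print(render_graph("mem0_carol", rows))
```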

How it Works Under the Hood

Developer Notes

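Mem0's graph memory was designed around other graph stores, such as Neo4j, whose Cypher dialect differs from FalkorDB's in a few places. Our runtime patching layer intercepts Mem0's internal graph calls and translates them into FalkorDB-optimized Cypher: no fork, no waiting on upstream changes.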
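To make the idea concrete, here is a deliberately small sketch of token-level rewriting that covers two of the differences such a layer handles: Neo4j's `elementId(n)` becomes `id(n)`, and an embedding written with `SET n.embedding = $embedding` is wrapped in FalkorDB's `vecf32()` vector constructor. `REWRITES` and `to_falkordb` are hypothetical and regex-based; the vector-index procedure call and `CALL { ... UNION ... }` subqueries need structural rewriting that a regex pass cannot express, and the plugin's real translation layer may work differently.

```python
import re

# Hypothetical, regex-based subset of Neo4j -> FalkorDB Cypher rewrites.
REWRITES = [
    # Neo4j's elementId(n) -> FalkorDB's id(n)
    (re.compile(r"\belementId\((\w+)\)"), r"id(\1)"),
    # Store embeddings as FalkorDB vectors via vecf32()
    (
        re.compile(r"\bSET\s+(\w+)\.embedding\s*=\s*\$embedding\b"),
        r"SET \1.embedding = vecf32($embedding)",
    ),
]


def to_falkordb(cypher):
    """Apply each textual rewrite in order and return the translated query."""
    for pattern, replacement in REWRITES:
        cypher = pattern.sub(replacement, cypher)
    return cypher


neo4j_query = "MATCH (n) WHERE elementId(n) = $id SET n.embedding = $embedding RETURN n"
print(to_falkordb(neo4j_query))
# MATCH (n) WHERE id(n) = $id SET n.embedding = vecf32($embedding) RETURN n
```

The rewrites are idempotent (running them twice changes nothing), which matters if a query can pass through the translation layer more than once.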

```python
from mem0_falkordb import register

# Runtime patch registration (no mem0 fork required)
register()

from mem0 import Memory

memory = Memory.from_config({
    "graph_store": {
        "provider": "falkordb",
        "config": {"host": "localhost", "port": 6379, "database": "mem0"},
    }
})
```

Cypher differences the patch layer translates (Neo4j-style Cypher used by Mem0 → FalkorDB equivalent):

- db.index.vector.queryNodes(...) → db.idx.vector.queryNodes(...)
- elementId(n) → id(n)
- SET n.embedding = $embedding → SET n.embedding = vecf32($embedding)
- CALL { ... UNION ... } → separate outgoing query + incoming query

Quickstart

Run the Mem0 + FalkorDB Demo in 3 Steps

Spin up FalkorDB

```shell
docker run --rm -p 6379:6379 falkordb/falkordb:latest
```

Set your environment

```shell
export OPENAI_API_KEY='your-key-here'
```

Run the Demo

We recommend using uv for lightning-fast dependency management:

```shell
git clone https://github.com/FalkorDB/mem0-falkordb.git
cd mem0-falkordb/demo
uv sync
uv run python demo.py
uv run python inspect_graphs.py
```

Memory shouldn’t be a flat list of text chunks. For agents to truly act as assistants, they need to understand the entities they interact with and the complex web of relationships that define them.

By combining Mem0’s sophisticated memory management with FalkorDB’s speed and per-user isolation, you can build agents that are faster, safer, and significantly smarter.

Give it a spin, star the repo, and let us know what you build!

- GitHub Repo: mem0-falkordb

- Managed Service: FalkorDB Cloud

FAQ

How does graph memory differ from vector memory for LLM agents?

Vector search retrieves semantically similar text chunks. Graph memory stores typed relationships between entities, enabling multi-hop reasoning across connected facts, not just similarity scores.

Does mem0-falkordb require forking Mem0 or modifying its source?

No. It uses Python runtime patching to translate Mem0’s internal calls into FalkorDB-optimized Cypher, so you don't need to fork Mem0 or wait for an upstream PR to land.

How does per-user graph isolation handle GDPR deletion requests?

Each user maps to a dedicated graph (e.g., mem0_alice). To purge all data for that user, run `GRAPH.DELETE mem0_alice`. No complex filtered DELETE queries across a shared dataset.

References and citations

- Mem0 Graph Memory Documentation. Mem0’s official reference on entity extraction, relationship storage, and hybrid vector+graph retrieval: https://docs.mem0.ai/open-source/features/graph-memory

- FalkorDB vs. Neo4j Performance Benchmarks. Sub-140ms p99 latency vs. Neo4j’s 46,923ms under equivalent workloads on aggregate expansion queries: https://www.falkordb.com/blog/graph-database-performance-benchmarks-falkordb-vs-neo4j/

- Mem0 Research Paper (arXiv). Empirical results showing Mem0 with graph memory achieves ~2% higher overall score vs. the base vector-only configuration across multi-hop and temporal question categories: https://arxiv.org/abs/2504.19413

- mem0-falkordb GitHub Repository. Plugin source code, demo scripts, and setup instructions: https://github.com/FalkorDB/mem0-falkordb

- Graphiti + FalkorDB Integration (Zep Blog). Independent benchmark corroboration citing 496x faster p99 latency and 6x better memory efficiency for FalkorDB: https://blog.getzep.com/graphiti-knowledge-graphs-falkordb-support/

- Mem0 Graph Memory for AI Agents (Mem0 Blog). Breakdown of graph vs. vector memory tradeoffs, with data on 91% faster responses and 90% lower token costs in hybrid retrieval mode: https://mem0.ai/blog/graph-memory-solutions-ai-agents