Key Takeaways

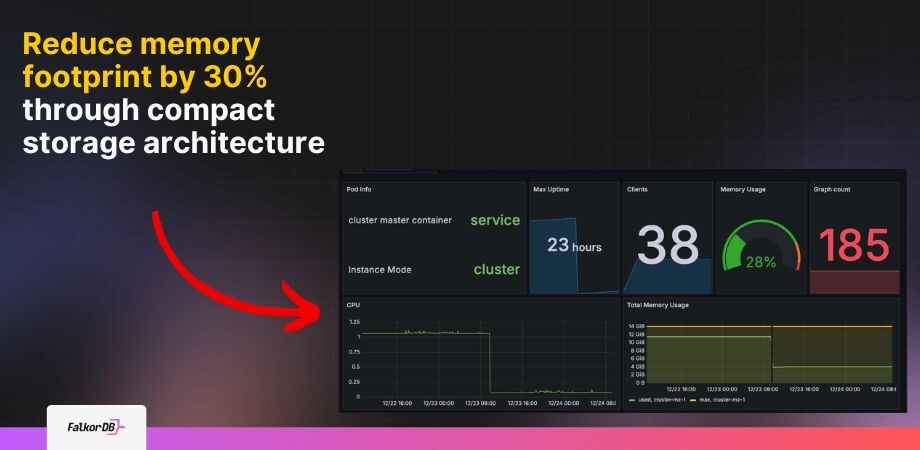

- Dual-representation storage cuts memory consumption by up to 30%. Graph data stays in a compact format until it is accessed at runtime, which reduces infrastructure costs for graph deployments.

- Batch processing speeds up CREATE, SET, and DELETE operations by grouping records into execution units, minimizing per-record overhead and context switching.

- An auto-shrink mechanism compacts deleted index arrays during heavy deletion cycles, preventing memory bloat without manual intervention or configuration.

FalkorDB v4.14.10 introduces a dual-representation storage architecture that reduces memory consumption by up to 30%, along with batch processing that accelerates write operations. The release targets infrastructure costs in graph deployments by storing data in a compact in-memory format and converting it to a runtime representation only when it is accessed or modified.

Performance Profile Comparison

Profiling data from v4.14.10 compares the baseline Master execution model with batch processing for FalkorDB's All Node Scan operation producing 50,000 records.

| Operation | Master | Batch Processing | Time Reduction |
|---|---|---|---|
| Scanning | 3.519056 ms | 1.658920 ms | 53% |
| Projection | 8.798891 ms | 5.035365 ms | 43% |
| Results Aggregation | 5.120015 ms | 0.107023 ms | 98% |
| Batch Count | N/A | 782 batches | N/A |
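The percentages in the table compare execution time against Master. The short check below uses only the timings from the table and reproduces them. In speedup terms, they correspond to roughly 2.1x, 1.7x, and 48x.

```python
# Per-operator timings in milliseconds, copied from the profile table: (Master, Batch Processing).
timings_ms = {
    "Scanning": (3.519056, 1.658920),
    "Projection": (8.798891, 5.035365),
    "Results Aggregation": (5.120015, 0.107023),
}


def percent_reduction(master_ms: float, batch_ms: float) -> float:
    """Share of the Master execution time eliminated by batch processing, in percent."""
    return (master_ms - batch_ms) / master_ms * 100


def speedup(master_ms: float, batch_ms: float) -> float:
    """How many times faster the batched run is than the Master run."""
    return master_ms / batch_ms


for op, (master_ms, batch_ms) in timings_ms.items():
    pct = percent_reduction(master_ms, batch_ms)
    print(f"{op}: {pct:.1f}% less time ({speedup(master_ms, batch_ms):.1f}x speedup)")
# Scanning: 52.9% less time (2.1x speedup)
# Projection: 42.8% less time (1.7x speedup)
# Results Aggregation: 97.9% less time (47.8x speedup)
```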

In the batch-processing profile, operators report record counts in batch units rather than individual records (782 batches for 50,000 records), which indicates a fundamental shift in execution granularity.
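The release notes do not state the batch size. If we assume fixed-size batches, the reported count is enough to infer it. The number of batches is ceil(50,000 / B), and B = 64 is the only size that yields 782 batches, with a final partial batch of 16 records. The sketch below checks this arithmetic. Treat the inferred size as an assumption, not a documented setting.

```python
TOTAL_RECORDS = 50_000   # records produced by the All Node Scan in the profile
OBSERVED_BATCHES = 782   # batch count reported by the profile


def batch_count(total: int, batch_size: int) -> int:
    """Fixed-size batches needed to carry `total` records; the last one may be partial."""
    return -(-total // batch_size)  # exact integer ceiling division


# Search a range of plausible batch sizes for those consistent with the observed count.
candidates = [b for b in range(1, 4097) if batch_count(TOTAL_RECORDS, b) == OBSERVED_BATCHES]
last_batch = TOTAL_RECORDS - (OBSERVED_BATCHES - 1) * candidates[0]
print(candidates, last_batch)  # [64] 16
```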

Performance Analysis

This architectural change improves resource utilization by reducing per-record overhead and improving cache efficiency. The largest gain appears in results aggregation, which drops from 5.120015 ms to 0.107023 ms, showing the benefit of processing data in batches rather than one record at a time.
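A toy pull-based pipeline in Python shows why batching cuts per-record overhead. It only counts hand-offs between a scan stage and a projection stage. It does not model FalkorDB's actual implementation or its cache behavior. `BATCH_SIZE = 64` is a hypothetical value, chosen because it matches the 782 batches reported for 50,000 records.

```python
from typing import Iterator, List, Tuple

BATCH_SIZE = 64  # hypothetical batch size, not a documented FalkorDB setting


def scan_records(n: int) -> Iterator[int]:
    """Record-at-a-time producer: the consumer pays one hand-off per record."""
    for node_id in range(n):
        yield node_id


def scan_batches(n: int, batch_size: int = BATCH_SIZE) -> Iterator[List[int]]:
    """Batched producer: the consumer pays one hand-off per batch of up to batch_size records."""
    for start in range(0, n, batch_size):
        yield list(range(start, min(start + batch_size, n)))


def project_per_record(n: int) -> Tuple[int, int]:
    """Apply a stand-in projection one record at a time; return (hand-offs, checksum)."""
    handoffs, checksum = 0, 0
    for node_id in scan_records(n):
        handoffs += 1
        checksum += node_id * 2  # stand-in for evaluating a projection expression
    return handoffs, checksum


def project_batched(n: int) -> Tuple[int, int]:
    """Apply the same projection batch by batch; return (hand-offs, checksum)."""
    handoffs, checksum = 0, 0
    for batch in scan_batches(n):
        handoffs += 1
        checksum += sum(node_id * 2 for node_id in batch)  # tight loop inside one batch
    return handoffs, checksum


print(project_per_record(50_000), project_batched(50_000))
```

Both versions compute the same result, but the batched pipeline makes 782 hand-offs instead of 50,000. In a real engine, each hand-off carries function-call and bookkeeping costs that batching amortizes across many records.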

Dual-Representation Storage Model

The v4.14.10 release maintains node and edge metadata in two distinct formats: compact storage mode and runtime representation mode. Compact storage mode minimizes the memory footprint by keeping data in a dense encoding. Runtime mode provides the expanded representation that active query processing and data manipulation require.
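The Python sketch below shows one node's attributes in both forms. The three-field schema and the `struct`-based packing are hypothetical. The release notes do not describe FalkorDB's actual compact encoding.

```python
import struct
import sys
from typing import Dict, Union

Value = Union[int, float, bool]

# Hypothetical fixed schema: int32 age, float64 score, bool active (little-endian, no padding).
COMPACT_LAYOUT = struct.Struct("<id?")


def to_compact(attrs: Dict[str, Value]) -> bytes:
    """Pack attributes into a dense byte string (compact storage mode)."""
    return COMPACT_LAYOUT.pack(attrs["age"], attrs["score"], attrs["active"])


def to_runtime(blob: bytes) -> Dict[str, Value]:
    """Expand the byte string into a mutable dict (runtime representation mode)."""
    age, score, active = COMPACT_LAYOUT.unpack(blob)
    return {"age": age, "score": score, "active": active}


sample = {"age": 42, "score": 0.5, "active": True}
blob = to_compact(sample)
# The packed form is 13 bytes of payload; the dict carries hashing and pointer overhead.
print(COMPACT_LAYOUT.size, sys.getsizeof(blob), sys.getsizeof(sample))
```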

When executing queries, the system converts compact data structures into runtime representations only as needed. This lazy approach reduces memory overhead for inactive graph elements while maintaining query performance for accessed data. As a result, FalkorDB can handle larger graph datasets within existing memory constraints, which directly lowers infrastructure costs.
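On-demand conversion can be sketched as a wrapper that keeps each element's attributes packed and expands them only on first read or write. The packing layout, field names, and `compact()` step are all hypothetical. In particular, `compact()` re-packs an element once it goes idle, but the source does not say when, or whether, runtime representations are converted back.

```python
import struct
from typing import Dict, Optional, Union

Value = Union[int, float, bool]
LAYOUT = struct.Struct("<id?")  # hypothetical schema: int32 age, float64 score, bool active
FIELDS = ("age", "score", "active")


class LazyNode:
    """Keeps attributes packed until first access, then caches the expanded runtime form."""

    def __init__(self, blob: bytes) -> None:
        self._blob: Optional[bytes] = blob
        self._runtime: Optional[Dict[str, Value]] = None
        self.materializations = 0  # how many times this node was expanded

    def _materialize(self) -> Dict[str, Value]:
        if self._runtime is None:
            self._runtime = dict(zip(FIELDS, LAYOUT.unpack(self._blob)))
            self._blob = None  # hypothetical policy: hold only one form at a time
            self.materializations += 1
        return self._runtime

    def get(self, key: str) -> Value:
        return self._materialize()[key]

    def set(self, key: str, value: Value) -> None:
        self._materialize()[key] = value

    def compact(self) -> None:
        """Hypothetical: re-pack the runtime form once the node is no longer active."""
        if self._runtime is not None:
            self._blob = LAYOUT.pack(*(self._runtime[f] for f in FIELDS))
            self._runtime = None


nodes = [LazyNode(LAYOUT.pack(i, i * 0.5, i % 2 == 0)) for i in range(1_000)]
score = nodes[7].get("score")  # only node 7 is expanded
nodes[7].set("active", True)   # the write reuses the cached runtime form
nodes[7].compact()             # node 7 returns to the packed form
expanded = sum(n.materializations for n in nodes)
print(score, expanded)  # 3.5 1
```

The other 999 nodes are never expanded, which is the memory saving the lazy approach relies on.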

Batch Processing Performance Gains

Batch processing improves CREATE, SET, and DELETE operation speeds by reducing the number of execution cycles required for bulk operations. The batching mechanism targets write-heavy workloads by consolidating multiple operations into efficient execution units.

Auto-Shrink for Deleted Index Arrays

FalkorDB v4.14.10 automatically compacts deleted index arrays so that the memory footprint stays bounded during heavy deletion cycles. The auto-shrink mechanism monitors the deleted index array and triggers compaction when the number of empty slots exceeds a threshold.

This feature addresses scenarios where large-scale deletion operations create fragmented memory pools with numerous empty slots. Without automatic compaction, these empty slots would continue consuming memory resources despite containing no active data.
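The source does not describe the array's layout or its thresholds. The sketch below models a free-index stack with an explicit capacity. It uses a common dynamic-array policy as a stand-in: halve the capacity once occupancy falls to one quarter, meaning three quarters of the slots are empty. `DeletedIndexArray`, `MIN_CAPACITY`, and the shrink rule are hypothetical and are not taken from FalkorDB.

```python
from typing import List


class DeletedIndexArray:
    """Stack of freed element indices whose backing storage shrinks when mostly empty.

    Hypothetical stand-in policy: double when full, halve when occupancy <= 1/4.
    """

    MIN_CAPACITY = 16

    def __init__(self) -> None:
        self._slots: List[int] = [0] * self.MIN_CAPACITY  # fixed-size backing storage
        self._count = 0  # number of occupied slots

    @property
    def capacity(self) -> int:
        return len(self._slots)

    def __len__(self) -> int:
        return self._count

    def push(self, index: int) -> None:
        """Record a deleted element's index, doubling storage when full."""
        if self._count == self.capacity:
            self._resize(self.capacity * 2)
        self._slots[self._count] = index
        self._count += 1

    def pop(self) -> int:
        """Hand out a freed index for reuse, shrinking when empty slots dominate."""
        if self._count == 0:
            raise IndexError("no deleted indices to reuse")
        self._count -= 1
        index = self._slots[self._count]
        if self.capacity > self.MIN_CAPACITY and self._count <= self.capacity // 4:
            self._resize(max(self.MIN_CAPACITY, self.capacity // 2))
        return index

    def _resize(self, new_capacity: int) -> None:
        """Copy occupied slots into storage of the new size (the compaction step)."""
        new_slots = [0] * new_capacity
        new_slots[: self._count] = self._slots[: self._count]
        self._slots = new_slots


free = DeletedIndexArray()
for idx in range(100_000):  # heavy deletion cycle: 100,000 freed slots
    free.push(idx)
peak = free.capacity
while len(free) > 10:       # most freed slots are reused by later inserts
    free.pop()
print(peak, free.capacity)  # 131072 32
```

Without the shrink branch in `pop`, the capacity would stay at 131,072 slots after the deletion burst, even though only 10 are occupied.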