The ability to scale out a database is crucial, in this short post I would like to walk through how FalkorDB scales out.

As a quick recap I should mention that FalkorDB is a native graph database, developed as a Redis module, Falkor can manage thousands of individual graphs on a single instance.

Baseline

We start out with a single FalkorDB instance, let’s call it primary, this instance handles both READ and WRITE operations.

# create primary database

docker run --name primary --rm -p 6379:6379 falkordb/falkordb

Once initial replication between the two servers is done we can divert all of our read queries to secondary and only hand off write queries to primary.

# create replica

docker run --name secondary --rm -p 6380:6380 -e REDIS_ARGS="--port 6380 --replicaof 172.17.0.2 6379" falkordb/falkordb:edge

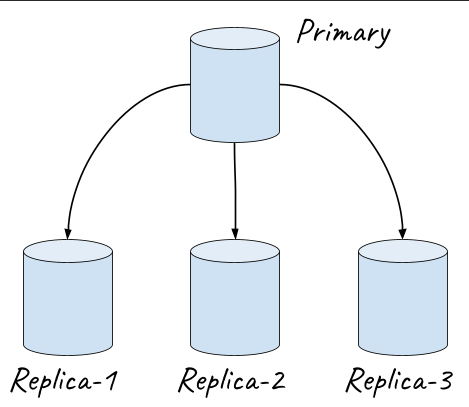

Multiple replicas

It is worth mentioning that we’re not limited to just a single READ replica, but we can create as many READ replicas as we need, e.g. a single primary and three read replicas: replica-1, replica-2 and replica-3.

A load balancer can distribute the read load among these three replicas.

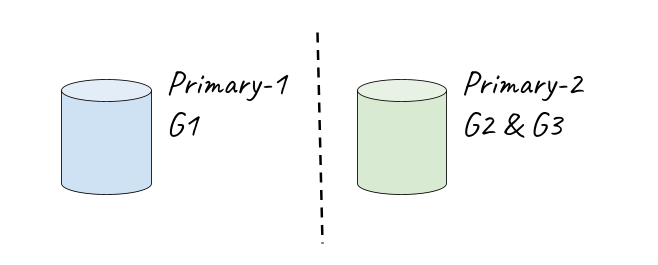

Distribute graphs

In the former example we’ve distributed the entire dataset from the primary database to multiple replicas, in cases where multiple graphs are managed on a single server e.g. primary-1 holds graphs: G-1, G-2 and G-3.

We can distribute the graphs among multiple servers, for example primary-1 would manage G-1 and a new server primary-2 would host G-2 and G-3.

Write operations will be routed to the appropriate server depending on the accessed graph key.

Of course each one of these primary servers can have multiple read replicas. e.g. primary-1 can have two read replicas and primary-2 will replicate its dataset to just a single replica.

FalkorDB version 4 introduce a quick and efficient way of replicating queries between primary and its replicas.

Up until recently a WRITE query (which ran to completion and modified a graph) would be replicated as-is to all replicas, causing each replica to re-run the query, although such a replication schema is simple and straightforward it entails a number of issues:

1. Replicated query might fail due to insufficient resources or timeout.

2. Using time related or random functions within a query risks ending up with data discrepancy.

# Usage of time and randomness

MATCH (a), (b)

WHERE a.create < time() -100 AND b.id = tointeger(100 * rand())

CREATE (a)-[:R]->(b)

# Although some WRITE queries are short and quick to execute e.g.

CREATE (:Country {name:'Montenegro'})

# Others might include a long and costly read portions e.g.

MATCH (c:Country)

WITH average(c.area / c.population) as avg_density

MATCH (c:Country) WHERE c.area / c.population > avg_density

SET c.crowded = true

It would be a waste of time re-running write queries on the replicas, the primary DB had already done the hard work, it computed the “change-set” and so instead of sending the original query to its replicas the primary sends the query’s “effects”, an effect is a compact binary representation of a change e.g. connect node 5 to node 72 with a new edge, or update node 81 ‘score’ attribute to the value 4.

Replicating via effects solves the two problems we’ve mentioned earlier, in addition to saving the time spent computing what needs to be changed on the replicas.

Benchmarking

The benchmark tests three setups:

single Primary

Primary & Replica seperating reads from writes

Primary & 2 Replicas scaling out reads

Querying a graph with ~50M nodes and ~50M edges

# Creating the dataset;

CREATE INDEX FOR (p:Person) ON (p.id)

UNWIND range(0, 1000000) AS x CREATE (p:Person {id:x})

MATCH (p:Person) UNWIND range(0, toInteger(rand() * 100)) AS x CREATE (p)-[:CONNECTED]->(:Z)

# READ query:

MATCH (p:Person {id:$id})-[]->() return count(1)

# WRITE query:

MATCH (a:Person {id:$a_id}) CREATE (a)-[:CONNECTED]->(:Z)